
For twenty years, SEO meant one thing: rank higher on Google. Build backlinks, optimize headers, speed up your server, and watch the traffic roll in. The playbook was clear, the metrics were familiar, and everyone knew the rules.

GEO changes the rules:

Your brand’s visibility in AI search will define your market position for the next decade.

Your buyers aren’t starting with a list of blue links anymore. They’re starting in a conversation. A recommendation. A summary generated by ChatGPT, Perplexity, Gemini, or Google’s AI Overviews.

And in that conversation, your brand either shows up in the results, or it’s not part of the buying decision.

Generative Engine Optimization (GEO) is how you respond to that shift and make sure your brand is in the answers.

We’ve been studying that shift at Foundation, tracking how and why certain brands get cited while others disappear, and applying our learnings from day one.

This guide breaks down everything we know about GEO, from the research that defines it, to the mechanics that power it, to the strategy that makes it work. Whether you’re just hearing about GEO for the first time or you’re already tracking Share of Model across platforms, this is the resource we wish existed when we started this work.

What Is Generative Engine Optimization?

Generative Engine Optimization is how you optimize your brand’s visibility within AI-generated answers across ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude.

When a VP of Marketing asks ChatGPT, “What are the best account-based marketing platforms for mid-market SaaS?”, the AI synthesizes information from dozens of sources and delivers a direct, cited response. GEO is how your brand ends up in the AI’s preferred sources, and what those sources say about you.

What the Academic Research Says

A seminal research paper from Princeton and IIT Delhi, published in 2024, introduced Generative Engine Optimization as a formal concept for the first time. The researchers defined GEO as a framework for helping content creators improve their visibility within generative engine responses, a fundamentally different challenge from traditional search engine optimization.

The core insight is that generative engines don’t simply rank websites in a list. They synthesize information from multiple sources into a single, structured response, embedding citations at varying positions and with varying levels of influence. That makes visibility far harder to define and measure than it was in the era of blue links.

The paper also proposed new metrics to capture this complexity, including position-adjusted word count and a subjective impression score that accounts for factors like a citation’s relevance, influence, and uniqueness within the generated response.
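To make position-adjusted word count concrete, here is a minimal Python sketch. It assumes each cited sentence's word count is discounted by an exponential decay on its position in the response. That is our reading of the idea, not a verified copy of the paper's formula, and the function name, domains, and sample response are all illustrative.

```python
import math

def position_adjusted_word_share(sentences, source):
    """Share of a response's words attributed to `source`, discounted by position.

    sentences: list of (text, set_of_cited_sources) in response order.
    Assumption: weight = exp(-position / number_of_sentences).
    """
    n = len(sentences)
    total_words = sum(len(text.split()) for text, _ in sentences)
    weighted = sum(
        len(text.split()) * math.exp(-pos / n)
        for pos, (text, cited) in enumerate(sentences)
        if source in cited
    )
    return weighted / total_words

# Made-up two-sentence response; each sentence cites one hypothetical domain.
RESPONSE = [
    ("Acme leads the category for mid-market teams.", {"acme.example"}),
    ("Globex is a cheaper option for startups.", {"globex.example"}),
]

acme_share = position_adjusted_word_share(RESPONSE, "acme.example")
globex_share = position_adjusted_word_share(RESPONSE, "globex.example")
```

Both sentences are the same length, so the only reason `acme_share` comes out higher than `globex_share` is that Acme's citation appears earlier in the response.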

Nearly two years later, a lot has changed about our understanding of GEO, and the playbook is getting clearer.

Understanding the SEO vs. GEO debate

The emergence of AI search and the never-ending quest to optimize for new channels have sparked a fierce SEO vs. GEO debate over whether the latter is actually distinct from the former in any meaningful way.

To be clear: search engine optimization is still critical to modern marketing efforts. Search engines have shaped how people find and retrieve information online for over three decades, and now the leading search engine company, Google, has entered the AI search space. But while SEO is fundamental to good GEO, it’s only part of the story.

Not only do LLMs cite content based on their own training data, but they also pull from a variety of platforms and popular websites, including Reddit, YouTube, Wikipedia, G2, and LinkedIn. That means more factors influence what a generative engine includes in its output, so you can’t rely on your web domain alone.

Here’s the simplest way to think about the relationship between SEO and GEO:

| Search Engine Optimization | Generative Engine Optimization |
| --- | --- |
| Optimize for rankings | Optimize for mentions and citations |
| Click volume | Influence on answers |
| Keyword positions | Brand mentions |
| Your domain only | Multi-platform presence |

How much do AI results and search results actually overlap?

High-ranking content on Google is frequently used by LLMs, but not consistently and not always in the ways you’d expect. Research over the last year shows that the overlap between the top 10 SERP results and AI responses for the same keyword is limited and keeps shifting:

- Only about 10% of AI Mode citations match Google’s organic results (Moz).

- ChatGPT’s chosen sources overlap with Google 39% of the time (Profound).

- Just 12% of URLs cited by LLMs rank in Google’s top 10 for the original prompt (Ahrefs).

So SEO performance alone doesn’t determine whether you show up in AI-generated answers.

GEO adds a new layer of visibility, one that depends on citation share, mention rate, sentiment, and your presence across the broader information ecosystem.

As our VP of Strategy, James Scherer, put it in his musings on GEO: “SEO is your space — your website, blog, technical optimization. GEO is all that stuff plus external influences. We don’t really optimize for generative engines; we influence them.”

SEO gives you a foundation. GEO pushes you beyond the confines of your website, into every source AI uses to learn about your category.

To execute on that, you need to understand why this shift is happening now.

Why GEO Matters Now

55% of enterprise buyers use AI to begin their search. Nearly half use it for market research. This shift isn’t emerging. It’s already shaping how decisions get made.

Here’s what makes this moment different from the early days of SEO or social media marketing: the window for first-mover advantage is narrower, the cost of inaction is higher, and the signals that matter are almost entirely invisible to traditional analytics.

Buyers Don’t Search the Same Way Anymore (Especially in B2B)

A Head of Revenue Operations opens Perplexity, not Google, and types: “Our sales team is spending too much time on manual forecasting. What tools can automate pipeline forecasting for mid-size SaaS companies?”

At this point, she’s not sure whether this is a CRM feature, a standalone tool, or a broader RevOps platform play. She’s trying to understand the landscape. The AI’s response will shape which categories she explores, which vendors she evaluates, and which competitors she never even considers.

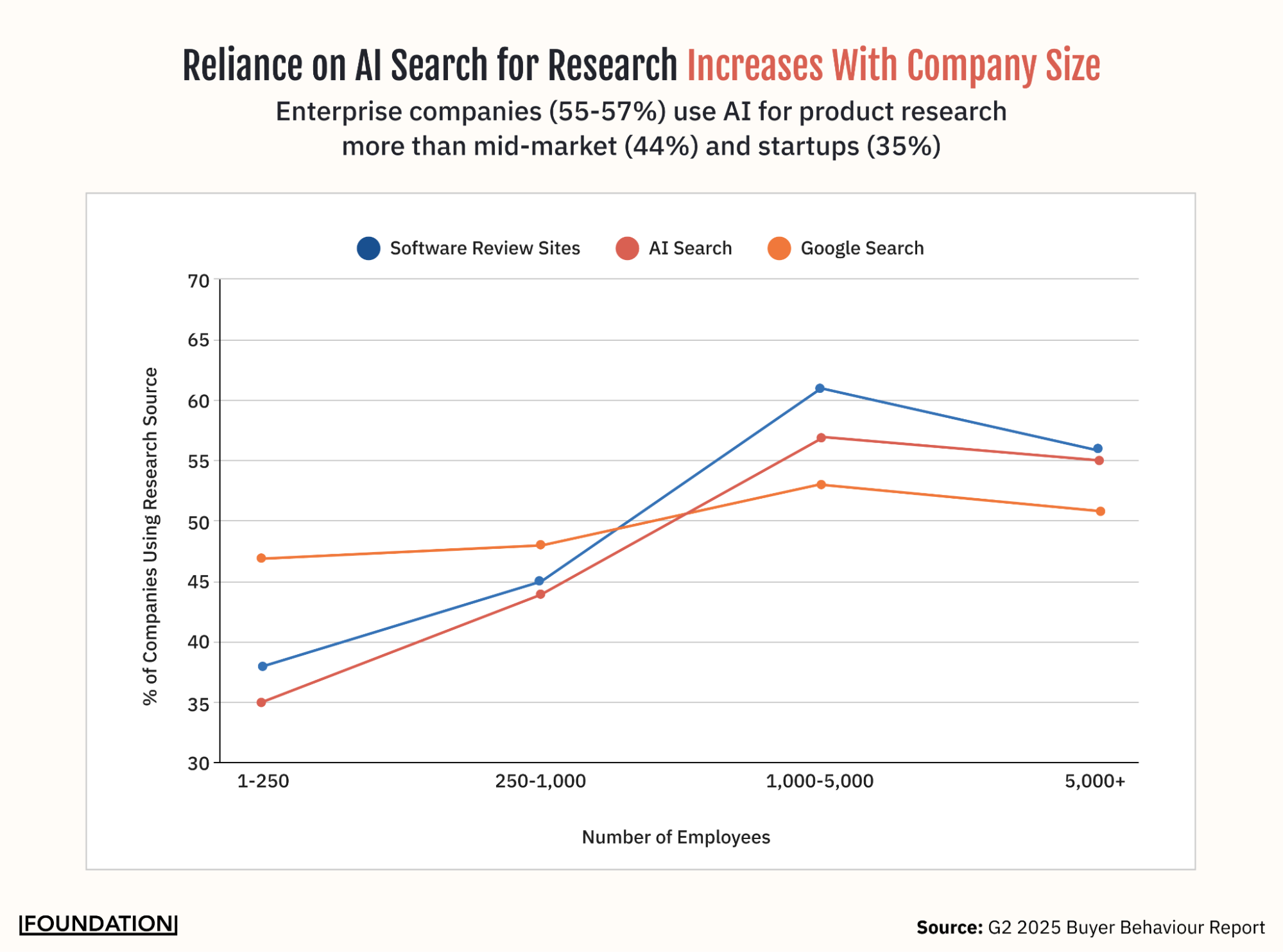

This is happening at scale. According to G2, 30% of all buyers now start with AI before Google. Nearly half (47%) use AI for market research. And the bigger the company, the more likely it is: 55–57% of enterprises with 1,000+ employees use AI tools for product research.

In traditional search, a buyer moves through the funnel incrementally — a top-of-funnel search, then a middle-of-funnel comparison, then a bottom-of-funnel evaluation. Each step takes days or weeks. AI compresses the buyer’s journey from weeks to minutes.

With an LLM, that journey happens in one interaction. The buyer asks a question, refines it, compares options, and reaches a decision without leaving the conversation.

When someone finally clicks through to your site, they’re past browsing. They’re evaluating.

AI traffic conversion data from Ahrefs supports this.

For Ahrefs, ChatGPT drives only 0.5% of visits, a rounding error, you might say. But that tiny sliver accounts for 12.1% of all new signups. That’s a conversion rate roughly 24 times higher than the average traffic source. It changes the economics of visibility.
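The "roughly 24 times" figure follows directly from the two percentages. A quick sketch of the arithmetic, using only the numbers quoted above (the variable and function names are ours):

```python
# Figures quoted above from the Ahrefs data.
traffic_share = 0.005   # ChatGPT's share of all visits (0.5%)
signup_share = 0.121    # ChatGPT's share of all new signups (12.1%)

def relative_conversion(signup_share: float, traffic_share: float) -> float:
    """How many times better a source converts than the site-wide average.

    A source's conversion rate is (its signups) / (its visits). Dividing that
    by the site-wide rate leaves signup_share / traffic_share.
    """
    return signup_share / traffic_share

lift = relative_conversion(signup_share, traffic_share)
print(f"ChatGPT converts at about {lift:.1f}x the site average")
```

The same ratio works for any channel: a source whose share of signups equals its share of traffic converts at exactly the site average.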

Organic Search Drives Volume, But AI Search Decides Who Gets Considered

Before you redirect your entire budget, note that Google still accounts for 73.7% of all desktop searches, while ChatGPT sits at just 3.2%. But AI is reshaping Google itself: roughly 16% of search results now show AI Overviews, making Google by far the largest AI search tool.

That matters for one specific reason: when a buyer clicks through to a source that’s been cited in an AI Overview, they arrive with far more context and intent than a typical organic visitor. The AI has already framed the category, defined the criteria, and surfaced your brand as relevant. That pre-qualification is why AI-cited traffic converts at dramatically higher rates. The goal, then, is to be the brand AI cites in the first place.

Which brings us to where the real competitive stakes lie. 6sense data finds that 95% of buyers purchase from their Day One shortlist — the set of vendors they’re already considering before they fill out a form or talk to a sales rep. The first vendor to make contact wins the deal 80% of the time.

AI sits upstream of that shortlist. It shapes how buyers understand a category before any trackable signal exists, and it frames which vendors belong in the conversation before a single website visit. If you’re not part of how AI explains your category, you may never make the Day One shortlist. No amount of retargeting will fix that.

AI Search Is Creating Winners. The Window to Become One Is Now.

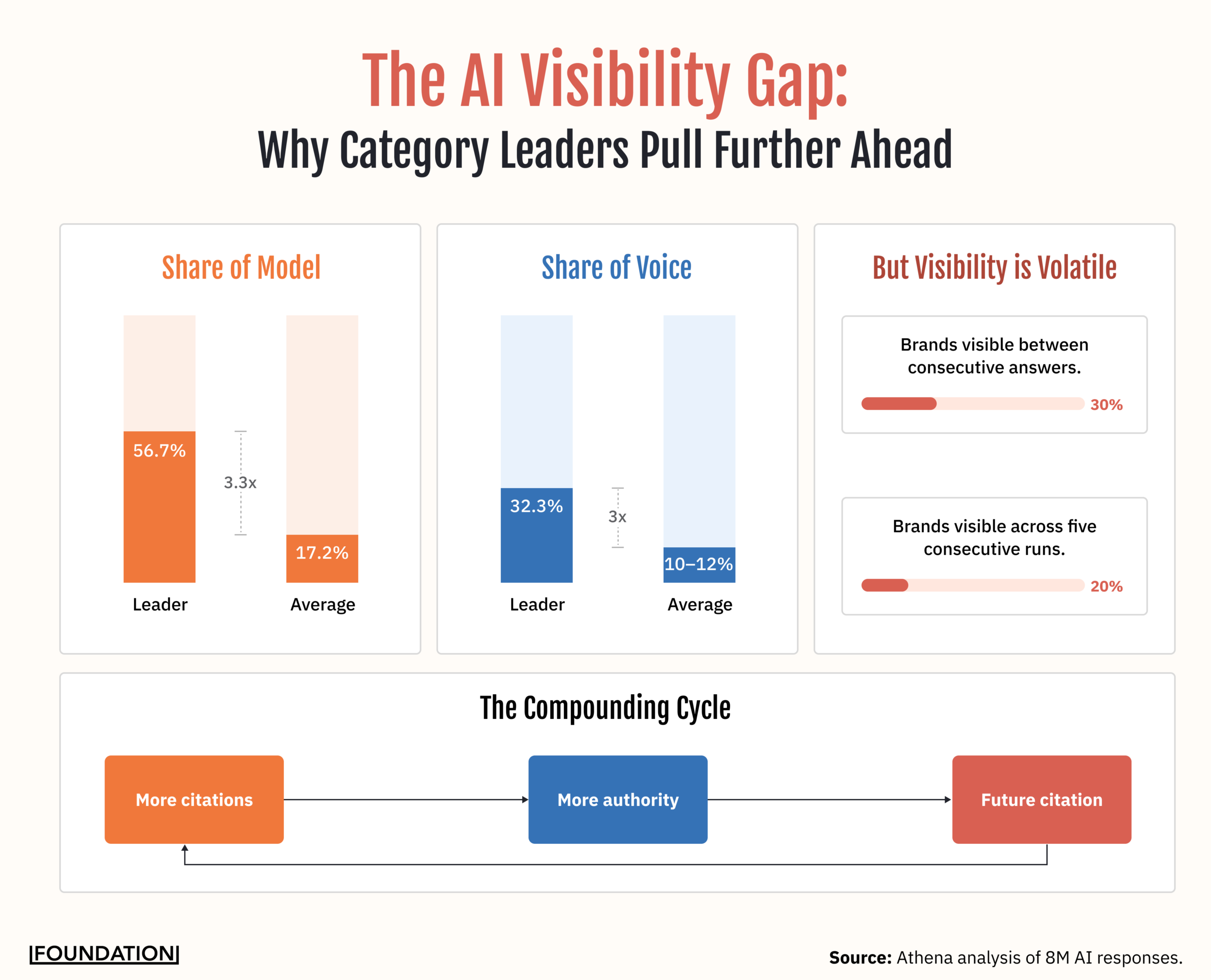

Analysis from Athena across 8 million AI responses reveals that the average brand appears in just 17.2% of relevant AI responses. The top brand in a given category? 56.7%. That’s more than three times the average.

Share of voice follows the same pattern: the leading brand captures 32.3% while the average competitor sits around 10–12%.

This creates a compounding effect. Citations build authority, and authority drives further visibility. Early movers who establish an AI presence are hard to displace.

But here’s the nuance that makes this even more interesting. Visibility in AI search is inherently volatile. AirOps research found that only 30% of brands stay visible between consecutive answers. Just 20% remain present across five consecutive runs. The models rebalance for diversity, freshness, and coverage, and build answers from scratch each time.

What we’re seeing is that the brands with the most consistent visibility are the ones earning both mentions and citations (a pattern we’ll break down in the Measuring GEO Performance section).

The data makes one thing clear: AI visibility isn’t distributed fairly, and it isn’t stable. But it is earnable if you understand how generative engines actually build their answers. That’s where strategy begins.

How Generative Engine Optimization Works

LLMs don’t process information the way Google’s crawler does. Instead of matching keywords to pages, they synthesize information from dozens of sources. They evaluate authority and recency, and construct responses from scratch every time someone asks a question.

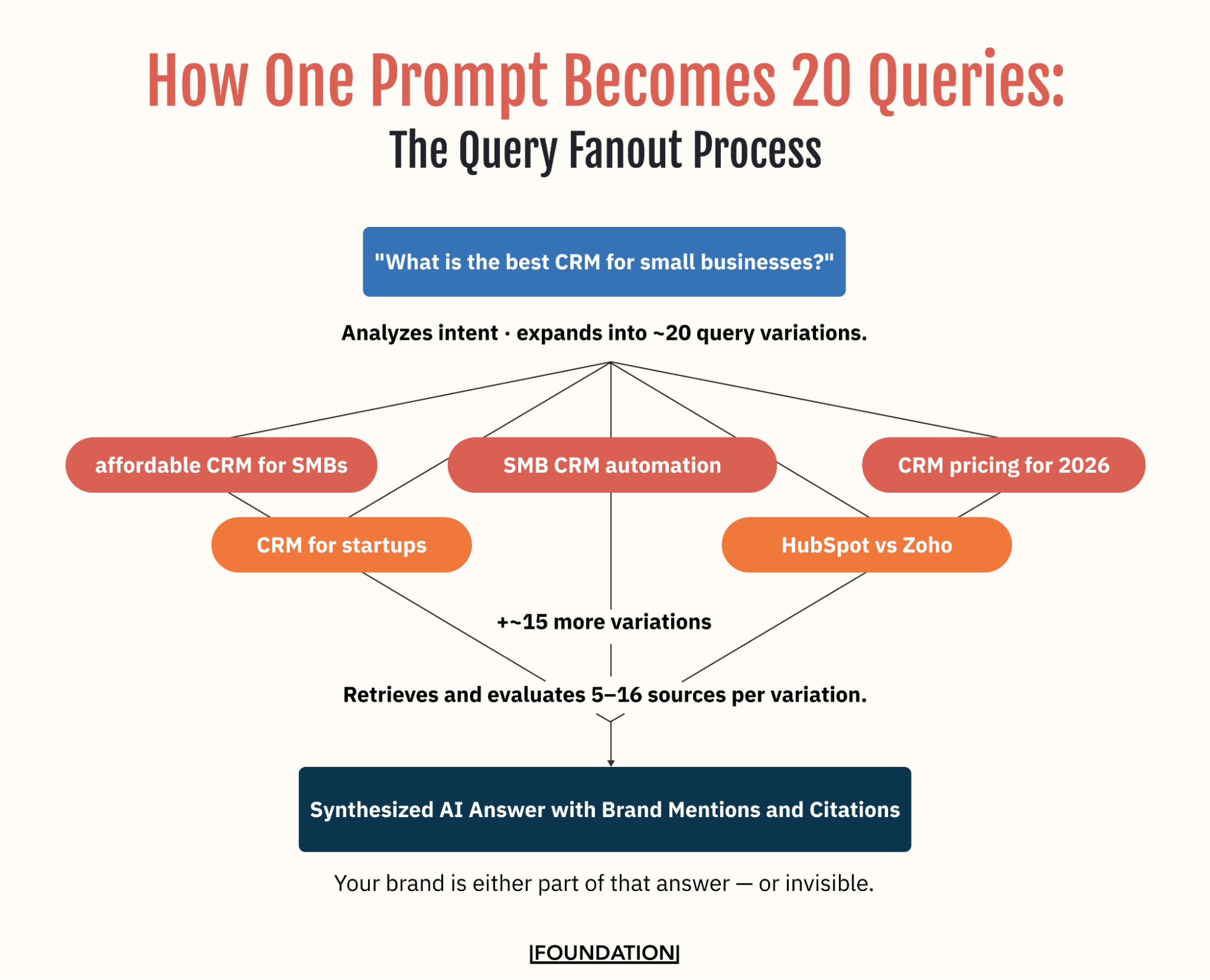

The Query Fanout Process

When someone types a question into ChatGPT or another LLM, the model doesn’t just process that single query. It runs a multi-step process:

First, it analyzes intent: Is this informational, comparative, or transactional?

Then it expands the query into roughly 20 variations.

A prompt like “best CRM” might fan out into “affordable CRM for SMBs,” “CRM comparison for startups,” “small business CRM with automation,” and dozens more.

For each variation, the model searches for and retrieves relevant sources. It then aggregates content from multiple sources, evaluates each for relevance, authority, and recency, and finally synthesizes a response with citations.

This fanout process is why you can’t just optimize for one keyword and hope to show up. The AI is evaluating your brand against a much wider set of queries than any single user would type.
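The fanout steps described above can be sketched as a toy pipeline. This is a simplified illustration, not how any particular model works internally: real engines generate variations with a model rather than a fixed list, and every name, modifier, and domain here is hypothetical.

```python
from collections import Counter

# Hypothetical modifiers; a real engine generates variations with a model.
MODIFIERS = ["affordable", "for startups", "with automation", "comparison"]

def fan_out(prompt: str, modifiers: list[str]) -> list[str]:
    """Expand one prompt into several query variations (toy version)."""
    return [prompt] + [f"{prompt} {m}" for m in modifiers]

def retrieve(query: str, index: dict[str, list[str]]) -> list[str]:
    """Return sources whose keyword list shares at least one word with the query."""
    words = set(query.lower().split())
    return [src for src, keywords in index.items() if words & set(keywords)]

def aggregate(prompt: str, modifiers: list[str], index: dict[str, list[str]]) -> Counter:
    """Count how many fanned-out queries each source was retrieved for."""
    hits = Counter()
    for query in fan_out(prompt, modifiers):
        hits.update(retrieve(query, index))
    return hits

# Toy index: source -> keywords it covers (made up for illustration).
INDEX = {
    "broad-guide.example": ["crm", "affordable", "startups", "automation", "comparison"],
    "single-keyword.example": ["affordable"],
}

coverage = aggregate("best crm", MODIFIERS, INDEX)
```

In this toy setup the broad guide is retrieved for all five variations, while the single-keyword page surfaces for only one. That is the practical argument for covering the breadth of a category rather than one phrase.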

Multi-Source Aggregation

The Athena analysis of 8 million AI responses shows just how many sources each model pulls from when building an answer:

- Grok draws from roughly 16 sources per response

- ChatGPT uses about 15

- Google AI Mode and Google AI Overviews each pull from around 11

- Gemini uses approximately 10

Where those sources come from tells you everything about where your GEO strategy needs to focus.

- Reddit accounts for 22.9% of the top-cited domains across AI models

- YouTube follows at 13.4%

- Wikipedia sits at 6.4%

- Forbes at 4.7%

- LinkedIn at 4.0%

These are the primary surfaces where AI learns about categories, and they’re not your website.

The implications are straightforward: You can’t predict every query variation an AI will generate, so you need comprehensive content that covers the full breadth of your category.

Typically, LLMs pull from 5 to 16 sources per answer, so your presence should be multi-platform. And different models favor different ecosystems. Perplexity leans heavily on community platforms (over 90%), while Gemini relies on them far less (roughly 7%), so you need to be visible across all major AI platforms, not just one.

Now you understand the machinery. AI doesn’t rank pages like search engines do. It assembles answers from wherever it finds the most trustworthy, relevant, and comprehensive information. That means your GEO strategy can’t be a single initiative or a one-time optimization. It needs to work across four dimensions simultaneously.

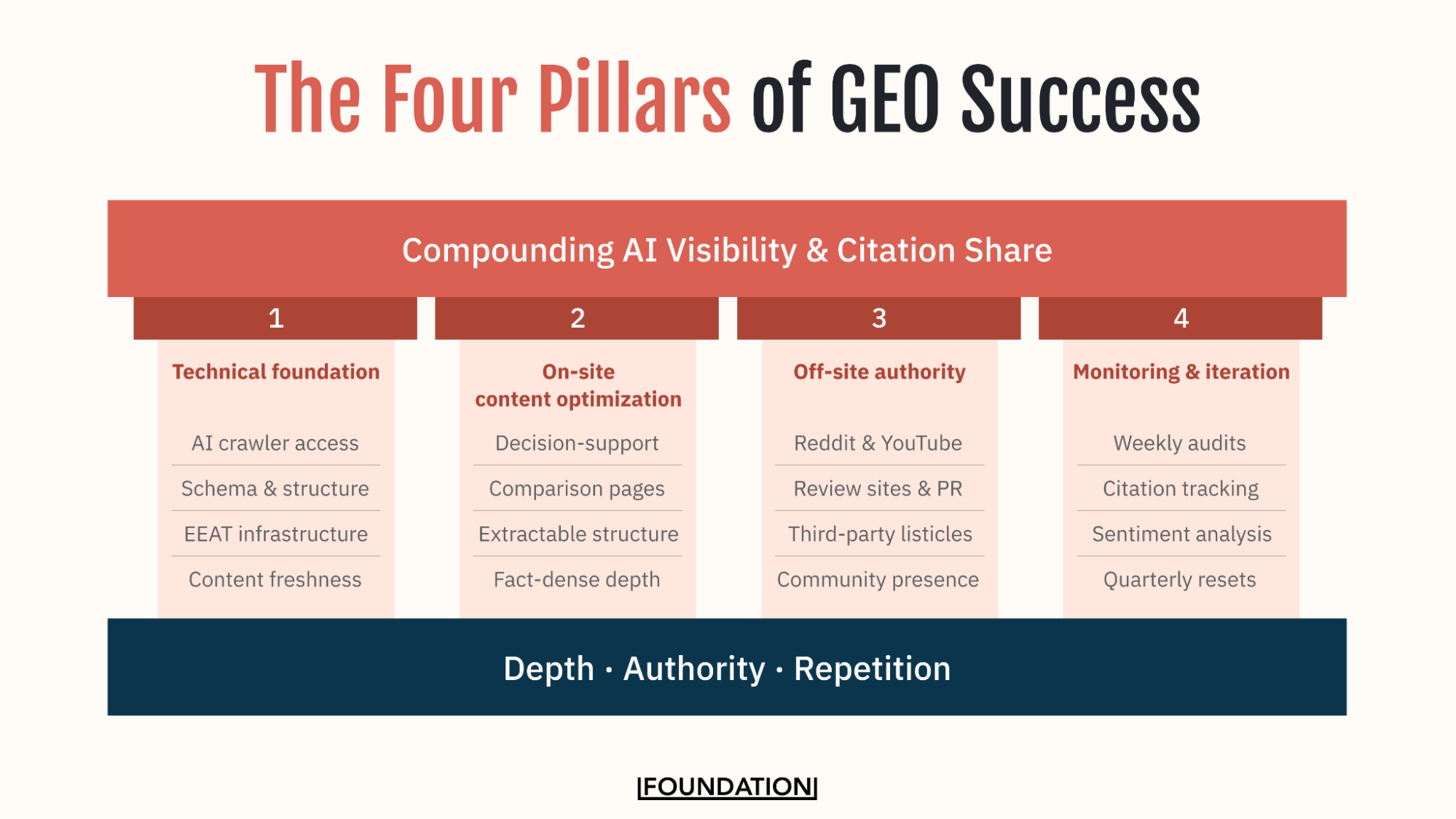

The Four Pillars of GEO Success

At Foundation, our GEO philosophy is built on a simple principle: LLMs reward depth, authority, and repetition across trusted surfaces, not isolated optimizations in a single domain.

You can’t win GEO by optimizing your website alone. Success requires orchestrating four pillars simultaneously:

- A technical foundation that makes your content discoverable and interpretable by AI

- On-site content optimization that makes it worth citing

- Off-site authority building across the platforms AI trusts most

- Ongoing monitoring and iteration to stay visible as the models evolve

1) Technical Foundation

Goal: Make your content easily discoverable and interpretable by AI systems.

What This Actually Means

Technical GEO isn’t glamorous, but it’s the foundation on which everything else sits. Think of it as the infrastructure that determines whether AI can even find and understand your content before it decides whether to cite it. The GEO-specific priorities layer on top of technical SEO, and if you skip this step, the rest of your strategy is built on sand.

Why It Matters

The AirOps data here is decisive. Pages with sequential heading hierarchies have 2.8 times higher citation rates than those with a fragmented structure. 87% of cited pages use a single H1. 68.7% of ChatGPT citations follow logical heading hierarchies. And 61% of cited pages use three or more schema types.

Freshness compounds this further. Pages not updated quarterly are more than three times more likely to lose citations. Over 70% of all pages cited by AI have been updated within the past 12 months, and more than 50% were refreshed within six months. In fast-moving industries like SaaS and fintech, content older than three months sees steep drops in citation likelihood.

How It Works

AI crawler permissions determine whether your content is accessible at all. Many sites inadvertently block AI indexing via their robots.txt files. To fix this, explicitly allow crawlers like Google-Extended, GPTBot, and Claude-Web. From there, LLMs.txt acts as a roadmap for LLMs navigating your site, communicating which pages matter most and what content is most relevant to your brand.
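One way to check for accidental blocks is Python's standard-library robots.txt parser. The sketch below tests the crawler names mentioned above against a sample file. The sample rules and URL are hypothetical, and vendors change their user-agent tokens over time, so confirm the current names in each vendor's documentation before relying on the list.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens named in this guide. Treat the list as an assumption and
# verify current user-agent strings with each vendor.
AI_CRAWLERS = ["Google-Extended", "GPTBot", "Claude-Web"]

def blocked_crawlers(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the agents that the given robots.txt text disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_crawlers(SAMPLE, "https://example.com/blog/post", AI_CRAWLERS)
print(blocked)
```

To audit a live site, paste its robots.txt contents into `SAMPLE` (or load the file from disk) and run the check against a few of your most important URLs.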

We think about EEAT (Experience, Expertise, Authoritativeness, Trustworthiness) as infrastructure, not a checklist. LLMs heavily weigh who is speaking. Thin or anonymous authorship suppresses citation potential. We address this early in every engagement because it amplifies everything else.

What AI Is Looking For

Comprehensive structured data is how AI understands what your business does. Organization, Product, and Service schema are table stakes. FAQ schema is particularly valuable. AirOps data shows it appears in 10.5% of cited pages, helping models map answers to queries directly. Article schema with author attribution, HowTo schema, and Review schema all strengthen the signals AI uses to evaluate your content.
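As an illustration of what FAQ schema looks like in practice, the sketch below builds schema.org `FAQPage` markup as JSON-LD from question and answer pairs. The helper name and the sample question are ours; the `FAQPage`, `Question`, and `Answer` types come from the schema.org vocabulary.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup (JSON-LD) from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

# Sample content taken from the definition earlier in this guide.
markup = faq_jsonld([
    (
        "What is Generative Engine Optimization?",
        "GEO is how you optimize your brand's visibility within AI-generated answers.",
    ),
])
print(markup)
```

The resulting JSON goes inside a `<script type="application/ld+json">` tag on the page that visibly contains the same questions and answers.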

What to Do

Audit your robots.txt file for AI crawler blocks. Implement LLMs.txt. Add structured data across your key pages, prioritizing FAQ and Article schema. Assign real author credentials to content. Set a quarterly content refresh cadence and treat it as non-negotiable.

| Key Takeaway: If AI can’t find your content, it can’t cite it. Technical GEO removes the barriers that sit between your content and every model that might surface it. |

2) On-Site Content Optimization

Goal: Create content that’s extractable, citable, and authoritative.

What This Actually Means

If Pillar 1 makes your content findable, Pillar 2 makes it worth finding. The question here is: when an AI evaluates your content alongside a dozen competitors’, does yours give it what it needs to build a confident answer? Most brand content doesn’t. Not because it’s poorly written, but because it’s structured for humans browsing, not AI synthesizing.

Why It Matters

Athena’s analysis of 8 million AI responses reveals what types of content AI models actually prefer. For B2B software specifically, comparative and selection content accounts for 27.7% of what gets cited, followed by informational content at 24.3% and acquisition/decision content at 21.3%. AI prioritizes decision-support content over promotional material.

Where AI enters your site also matters. Blog content accounts for 44.5% of AI entry points, followed by the homepage at 19% and product pages at 13.3%. Educational hubs are the primary knowledge source for AI, not your product pages.

How It Works

AI models prefer content that’s fact-dense, clearly structured with headers and bullet points, and easy to quote. We recommend a three-layer structure: a direct answer in the first 50 words, a “why it matters” section of 100–150 words, and then deep analysis of 1,000+ words. This gives the AI an extractable snippet at the top while providing the depth and authority signals it needs to trust your content.
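If you want to audit pages against the three-layer structure at scale, a rough word-count check is enough to flag outliers. This sketch assumes you have already split a page into its three sections; the function names and thresholds simply mirror the recommendation above.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())

def check_three_layers(answer: str, why: str, analysis: str) -> dict[str, bool]:
    """Flag whether each layer falls inside the recommended word range."""
    return {
        "direct_answer_within_50_words": word_count(answer) <= 50,
        "why_it_matters_100_to_150_words": 100 <= word_count(why) <= 150,
        "deep_analysis_1000_plus_words": word_count(analysis) >= 1000,
    }

# Placeholder text standing in for real page sections.
report = check_three_layers(
    answer="word " * 40,
    why="word " * 120,
    analysis="word " * 600,
)
print(report)
```

In this example the first two layers pass and the deep-analysis layer is flagged as too short. Word counts are only a proxy, so treat a failed check as a prompt to review the page, not as a verdict on its quality.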

What AI Wants to Cite

The bottom-of-funnel content types that drive the most GEO value are the ones Foundation has been recommending for years. They’re just more important now: alternative pages, comparison pages, best-of lists, migration guides, and top-ten roundups. Research from Profound shows that 32.5% of all LLM citations come from comparative listicles.

What not to create: glossaries. There’s no point in being the cited source for a definition that an AI Overview surfaces directly, because there’s virtually no click-through on glossary content from AI answers. Definitions belong in FAQ schema, not standalone pages.

What to Do

Audit your existing content for extractability. Restructure key pages with the three-layer format. Prioritize building or updating comparative and decision-support assets. Replace any standalone glossary investment with FAQ schema and bottom-of-funnel content.

| Key Takeaway: AI cites content it can use to build a confident answer. Structure your content around the decisions your buyers are making, not the features you want to promote. |

3) Off-Site Authority & Citations

Goal: Build presence across the platforms AI trusts most.

What This Actually Means

This is where GEO diverges most sharply from traditional SEO. And it’s where most brands (and most agencies) fall short. Here’s the hard truth: if you just hire an agency to optimize your site for GEO, you are missing up to 90% of the opportunity. The vast majority of your AI visibility won’t come from your own domain. It will come from everywhere else.

Why It Matters

AirOps data reveals perhaps the starkest data point in GEO: 85% of brand mentions in AI answers come from third-party sources. Only 15% come from a brand’s own website. Brands are 6.5 times more likely to be cited through third-party content than their own domain. And roughly 90% of those third-party mentions come from listicles, comparison pages, and review roundups.

Now, let’s get realistic for a second. Based on our internal data at Foundation, achieving even 5% citation share from your own domain is a huge win. In highly competitive B2B verticals, that’s the ceiling, not the floor. That means 95% of citations come from domains you don’t control.

This reframes what “winning” at GEO actually means. It’s not about dominating the citation pool. It’s about limiting the number of competitors cited more often than you and stacking influence across platforms. If you’re consistently among the top three cited brands in your category, you’re winning.

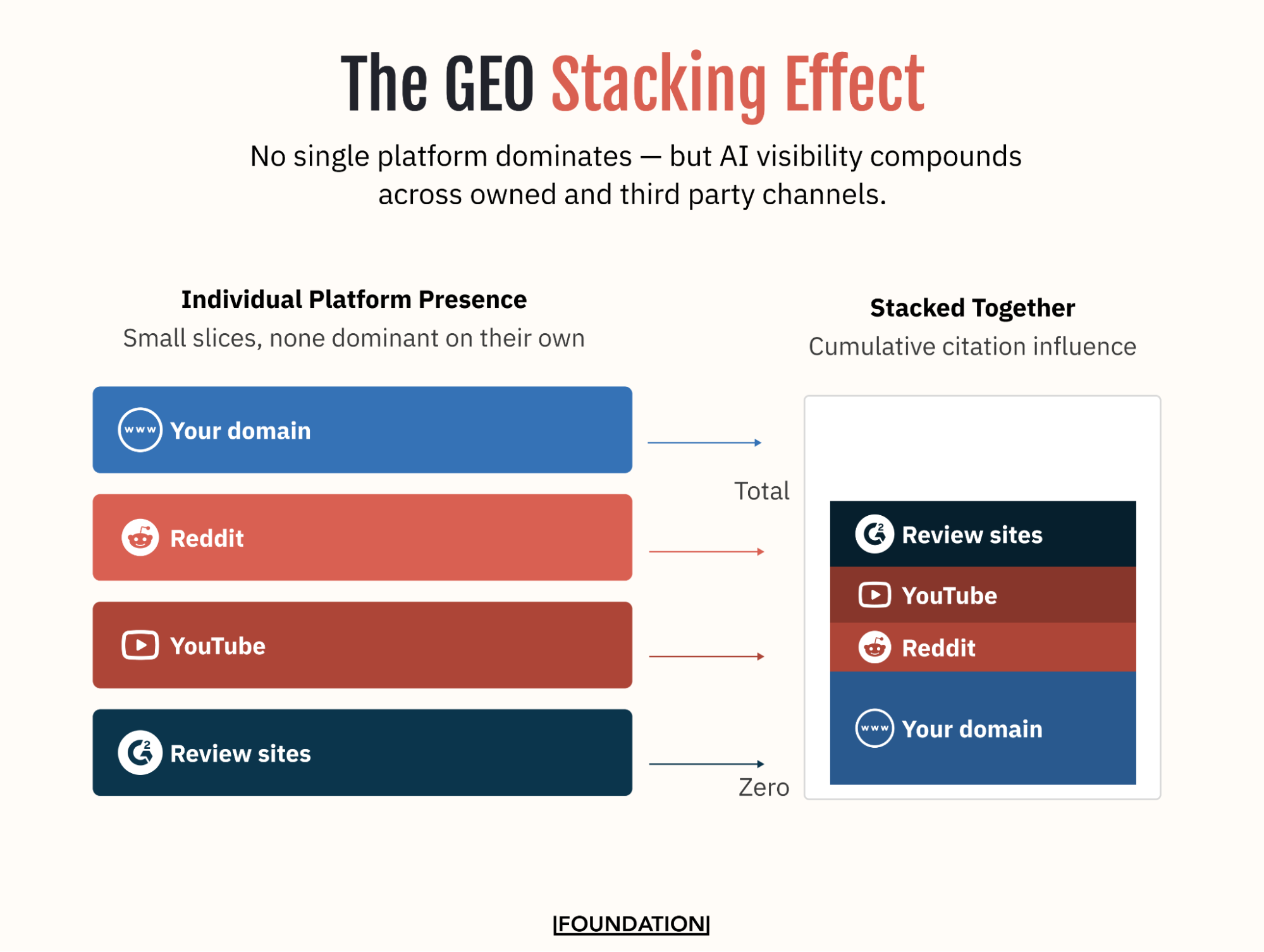

How It Works

Your total AI impact is cumulative across all sources, a phenomenon that our strategy team calls the GEO stacking effect. Your domain might account for 4% of citations, with additional influence coming from Reddit, YouTube, and review platforms. Individually, none of these dominates. Together, they compound, and your effective citation influence can put you ahead of 95% of competitors without dominating any single platform.

It’s also important to note that different models lean on different ecosystems. Perplexity relies on community platforms over 90% of the time, while Gemini uses them in only about 7% of answers. You need presence across the board, because optimizing for one model at the expense of others leaves significant visibility on the table.

Where AI Models Learn

About 48% of citations come from community and user-generated platforms. Reddit appears in roughly 1 in 5 AI answers and accounts for 88% of category-level exploration queries. YouTube is the second most-cited source in Gemini and Perplexity, with 75% of its citations driven by educational, non-branded queries. LinkedIn provides professional validation. Wikipedia supplies foundational definitions. Review sites like G2, Capterra, and TrustRadius round out the trust layer.

One more thing worth knowing: nofollow links equal dofollow for AI. AirOps correlation data shows that nofollow links (0.509 Spearman correlation with AI visibility) perform essentially identically to dofollow links (0.504). AI treats links as recognition signals, not authority transfer mechanisms, which means the entire traditional link-building playbook needs updating.

What to Do

Target already-cited sources first. One of the highest-leverage moves in off-site GEO isn’t creating new content from scratch. It’s improving your position where you already appear (moving from #5 to #3 on a listicle) and securing inclusion where you’re missing entirely. Coordinate with PR teams to get featured in the publications AI already trusts.

Stop optimizing exclusively for dofollow backlinks. Start optimizing for breadth of recognition across forums, reviews, and community platforms where the link type doesn’t matter, but the mention does.

| Key Takeaway: GEO isn’t about owning your domain. It’s about showing up across the ecosystem AI uses to form answers, and that ecosystem is mostly places you don’t control. |

4) Monitoring & Iteration

Goal: Stay visible as the models evolve.

What This Actually Means

GEO isn’t a one-time optimization. The models rebalance constantly, new sources get cited, and competitors adapt. The brands that maintain visibility are the ones treating GEO as an ongoing operational discipline, not a project with a defined end date. The operational rule that guides everything we do at Foundation is simple: if it’s not changing mentions, citations, or sentiment, we revisit it.

Why It Matters

Visibility in AI search is inherently volatile. AirOps research found that only 30% of brands stay visible between consecutive answers. Just 20% remain present across five consecutive runs. Without a monitoring cadence, you have no way of knowing whether your strategy is working, or whether you’ve quietly fallen off the map.

How It Works

“Changing” in the context of GEO means your website is being mentioned or cited more frequently, you’re appearing in sources AI pulls from (Reddit, reviews, listicles), and your presence is improving: higher position, better sentiment, more consistent visibility across platforms.

Top GEO tools like Profound, AirOps, Athena, and Semrush’s AI Toolkit make this trackable today. We recommend weekly visibility audits on your top 20 prompts, monthly trend analysis and citation tracking, and quarterly deep-dives on sentiment, competitive benchmarks, and strategy refinement.

What to Track

Every initiative must answer one question: does this increase the likelihood of AI visibility? That means tracking mentions and citations across platforms, not just your own domain. Monitor which sources AI is pulling from in your category, where competitors are appearing that you aren’t, and how sentiment around your brand is being framed in AI responses.

What to Do

Build a prompt library of the 20 queries most relevant to your category and run them weekly. Set up monthly citation tracking across the platforms AI trusts most in your vertical. Use quarterly strategy reviews to reallocate effort. If Reddit is the most-cited source for your category, invest heavily there; if YouTube isn’t driving visibility, scale back and redirect.

| Key Takeaway: GEO compounds when it’s actively managed. The brands with the most consistent AI visibility aren’t the ones who optimized once — they’re the ones who kept showing up. |

Measuring GEO Performance

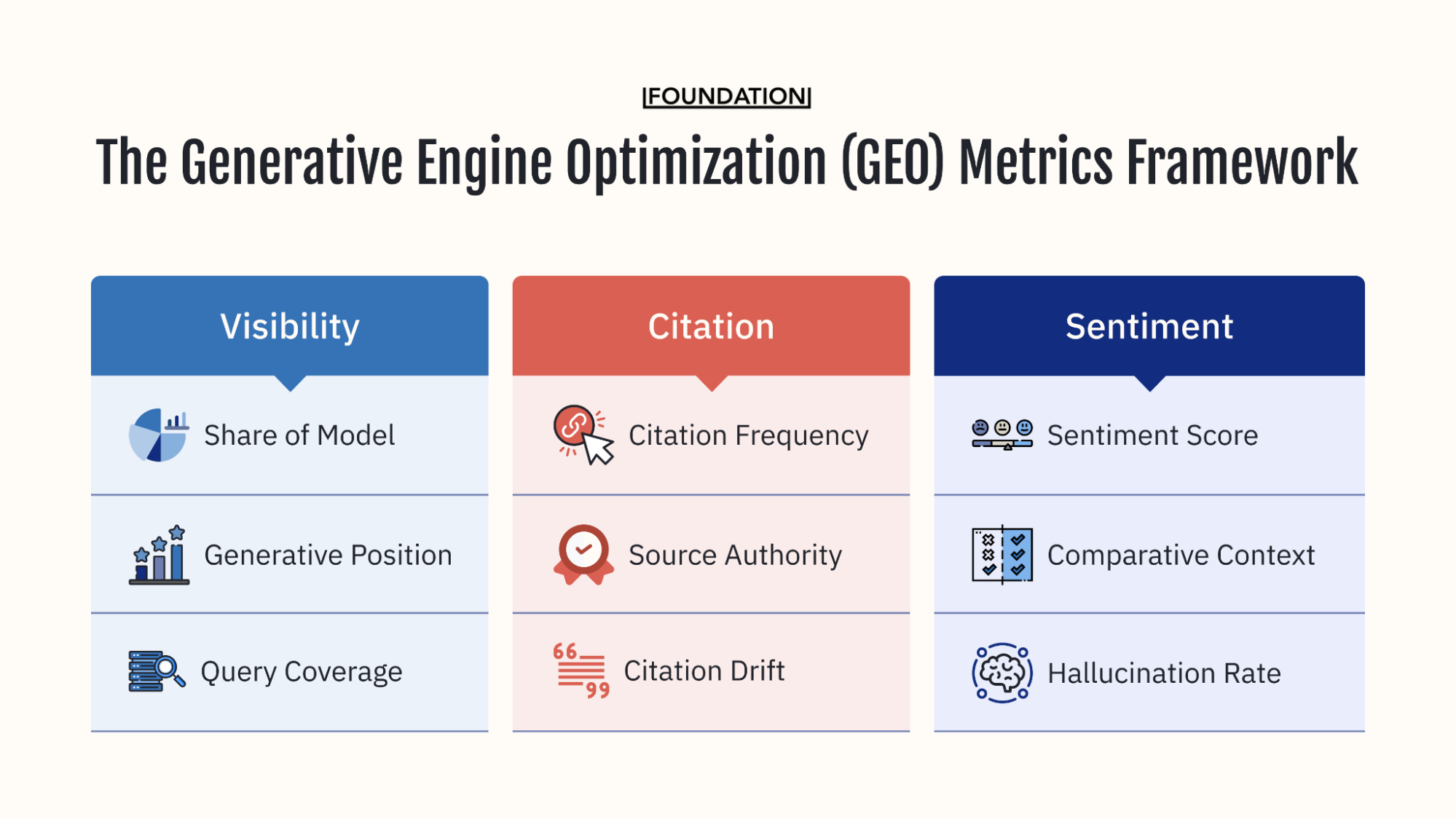

The Foundation Marketing framework for measuring GEO metrics is built around three pillars: visibility, citation, and sentiment. Together, they answer whether AI sees you, trusts you, and likes you.

The Three Pillars of Measurement

Visibility Metrics (Do they see me?)

- Share of Model measures the percentage of times your brand appears for category prompts across AI platforms.

- Generative Position tracks where you land in AI-generated lists — being first-mentioned versus fifth-mentioned carries very different weight in how users interpret the response.

- Query Coverage tells you whether you’re showing up across the full range of buyer intents, including the expanded “fanout” queries AI generates from a single prompt.
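The three visibility metrics above can be computed from a simple log of prompt runs. The sketch below assumes each run records the prompt and the brands in the order the AI mentioned them. The data layout, function names, and brand names are hypothetical, and commercial tools may define these metrics differently.

```python
from statistics import mean

def share_of_model(runs: list[dict], brand: str) -> float:
    """Fraction of runs in which the brand appears at all."""
    return sum(brand in r["brands"] for r in runs) / len(runs)

def generative_position(runs: list[dict], brand: str):
    """Average 1-based position in the answer, over runs where the brand appears."""
    positions = [r["brands"].index(brand) + 1 for r in runs if brand in r["brands"]]
    return mean(positions) if positions else None

def query_coverage(runs: list[dict], brand: str) -> float:
    """Fraction of distinct prompts for which the brand appeared at least once."""
    prompts = {r["prompt"] for r in runs}
    covered = {r["prompt"] for r in runs if brand in r["brands"]}
    return len(covered) / len(prompts)

# Made-up run log for illustration.
RUNS = [
    {"prompt": "best crm", "brands": ["Acme", "Globex", "Initech"]},
    {"prompt": "best crm", "brands": ["Globex", "Initech"]},
    {"prompt": "crm for startups", "brands": ["Globex", "Acme"]},
    {"prompt": "crm with automation", "brands": ["Initech"]},
]

acme_som = share_of_model(RUNS, "Acme")
acme_position = generative_position(RUNS, "Acme")
acme_coverage = query_coverage(RUNS, "Acme")
```

Here Acme appears in half of the runs, at an average position of 1.5, and covers two of the three distinct prompts.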

Citation Metrics (Do they trust me?)

- Citation Frequency measures how often AI links to your domain.

- Source Authority evaluates the quality of third-party sites citing you. A G2 review, a Forbes listicle, and a Reddit thread all carry different weights.

- Citation Drift tracks how often your brand gets swapped out for a competitor as the model rotates through sources.
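Citation Drift has no single standard formula, so the sketch below uses one plausible definition: of the consecutive run pairs where your domain was cited in the first run, the fraction where it was missing from the next. The function name, domains, and sample runs are hypothetical.

```python
from typing import Optional

def citation_drift(cited_by_run: list[set], domain: str) -> Optional[float]:
    """Fraction of run-to-run transitions in which a cited domain was dropped.

    cited_by_run: the set of cited domains for each run of the same prompt, in order.
    Returns None when the domain was never cited in a run that has a successor.
    """
    drops = 0
    opportunities = 0
    for prev, curr in zip(cited_by_run, cited_by_run[1:]):
        if domain in prev:
            opportunities += 1
            if domain not in curr:
                drops += 1
    return drops / opportunities if opportunities else None

# Made-up citations from five consecutive runs of one prompt.
CITED = [
    {"acme.example", "globex.example"},
    {"globex.example"},
    {"acme.example", "globex.example"},
    {"acme.example"},
    {"globex.example"},
]

acme_drift = citation_drift(CITED, "acme.example")
```

In this sample the domain was cited going into three transitions and dropped in two of them, giving a drift of about 0.67.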

Sentiment Metrics (Do they like me?)

- Sentiment Score tracks whether AI describes your brand positively, neutrally, or negatively.

- Hallucination Rate monitors factually incorrect information, such as wrong pricing, discontinued products listed as current, or confusion with competitors.

- Competitive Positioning reveals how you’re framed relative to competitors in shared responses.

Understanding Visibility Volatility

Unlike SEO rankings, which shift gradually over weeks, AI visibility fluctuates with every query. The AirOps benchmarks are important to internalize: only 30% of brands stay visible between consecutive answers, and just 20% remain present across five runs.

But here’s the important nuance: most visibility loss isn’t permanent. More than 50% of brands that drop from an answer resurface within one to three runs. Brands with dual-signal visibility (both mention and citation) show 40% higher recurrence likelihood and return faster after drops.

What you should do: Measure presence over 20–50 runs, not single snapshots. Monitor return speed after drops. Aim for dual-signal visibility. And expect 20–30% volatility as the baseline.
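A minimal sketch of how presence and return speed could be measured from repeated runs of one prompt. It assumes you record a simple visible or not-visible flag per run. The sample below has only eight made-up runs to keep it readable; in practice you would feed in the 20 to 50 runs recommended above.

```python
def presence_rate(visible: list[bool]) -> float:
    """Share of runs in which the brand was visible."""
    return sum(visible) / len(visible)

def return_gaps(visible: list[bool]) -> list[int]:
    """Length of each absence streak that ended with the brand reappearing.

    Absences before the first appearance and after the last one are ignored,
    because they are not completed drop-and-return cycles.
    """
    gaps = []
    streak = 0
    seen = False
    for v in visible:
        if v:
            if seen and streak:
                gaps.append(streak)
            streak = 0
            seen = True
        elif seen:
            streak += 1
    return gaps

# Made-up visibility flags for eight consecutive runs.
RUN_FLAGS = [True, True, False, True, False, False, True, False]

rate = presence_rate(RUN_FLAGS)
gaps = return_gaps(RUN_FLAGS)
```

For this sample the brand is present in half of the runs and came back after absences of one and two runs. Comparing those gaps against the one-to-three-run resurfacing pattern described above tells you whether a drop is routine volatility or something to investigate.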

What Success Looks Like

Based on Athena data, here’s a typical trajectory for brands implementing GEO:

- Baseline: 0–5% Share of Model.

- Three months: 8–15%.

- Six months: 15–25%.

- Twelve months: 25–40%.

Top performers reaching 40–60% Share of Model share common characteristics: deep off-site presence across Reddit, YouTube, and review sites; quarterly content refresh cycles; robust schema implementation; and active community engagement.

The competitive gap is already significant. Average competitors sit at roughly 17% Share of Model, while category leaders reach approximately 57%, a 3x advantage that widens every quarter.

Measurement tells you whether GEO is working. But the question most executives ask first isn’t about metrics. It’s about money. The honest answer is more nuanced than most agencies will admit, and understanding it is critical to setting the right expectations with your leadership team.

Understanding the ROI of GEO

Every marketing leader eventually asks the same question: What’s the return? For GEO, the answer requires rethinking how you define “return” in the first place.

GEO doesn’t fit neatly into a last-click attribution model. It doesn’t produce a clean line from investment to revenue the way a paid search campaign does. But neither do brand marketing, PR, or thought leadership, and nobody seriously argues those investments aren’t worth making.

The challenge with GEO is that its impact is real, measurable in aggregate, and strategically critical, but it requires a different measurement mindset than most marketing teams are used to.

The Attribution Problem

Let’s be honest about this: isolating the ROI of GEO using traditional attribution models is currently impossible.

GEO operates in zero-click environments. When a prospect gets a recommendation from ChatGPT, validates it in a Reddit thread, and visits your website three days later, there’s no way to connect those dots with standard analytics. Multi-touch attribution breaks down when the “touch” happens outside your ecosystem.

The question isn’t “What’s the ROI of GEO?” because that framing leads nowhere productive.

It’s: “What’s the opportunity cost of ceding territory to competitors across every AI platform buyers use?”

GEO influences buyer behaviour and strengthens market position, but it doesn’t offer clean attribution to revenue. Think of it as infrastructure investment: similar to investing in a CRM before proving direct revenue impact, or developing brand guidelines without calculating exact ROI.

The Traffic Quality Paradox

The Ahrefs data bears repeating because it’s so striking. ChatGPT drives 0.5% of their traffic but 12.1% of signups, roughly 24 times the average conversion rate. Additional data from the Athena report suggests that AI-attributed leads close 20–30% faster than traditional organic leads.

Traffic volume goes down. Lead quality goes up.

By the time someone clicks through from an AI tool, they’ve already completed the buyer’s journey in a conversational session. They’ve asked follow-up questions, compared alternatives, and self-qualified on features, pricing, and fit.

From a traditional metrics point of view, it looks scary: lower organic traffic, fewer top-of-funnel visits, reduced pageviews per session.

But quality metrics improve: higher conversion rates, shorter sales cycles, better product-market fit, more informed sales conversations.

In other words, don’t judge GEO by traffic volume. Track lead quality scores from sales, sales cycle length for AI-attributed leads, win rate for AI-influenced opportunities, and customer lifetime value segmented by discovery source.
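Segmenting by discovery source is straightforward once each lead carries a source label, for example from an onboarding survey or a CRM field. The sketch below groups leads and compares win rate and sales cycle length. The field names and sample rows are invented for illustration and are not real results.

```python
from collections import defaultdict
from statistics import mean

def summarize_by_source(leads: list[dict]) -> dict[str, dict]:
    """Win rate and average sales cycle length for each discovery source."""
    groups = defaultdict(list)
    for lead in leads:
        groups[lead["source"]].append(lead)
    return {
        source: {
            "win_rate": sum(l["won"] for l in rows) / len(rows),
            "avg_cycle_days": mean(l["cycle_days"] for l in rows),
        }
        for source, rows in groups.items()
    }

# Invented sample rows; replace with an export from your own CRM.
LEADS = [
    {"source": "ai", "won": True, "cycle_days": 30},
    {"source": "ai", "won": False, "cycle_days": 40},
    {"source": "organic", "won": True, "cycle_days": 60},
    {"source": "organic", "won": False, "cycle_days": 50},
    {"source": "organic", "won": False, "cycle_days": 70},
]

summary = summarize_by_source(LEADS)
```

The same grouping extends to lead quality scores or customer lifetime value by adding those fields to each row and to the summary.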

GEO Examples and Case Studies

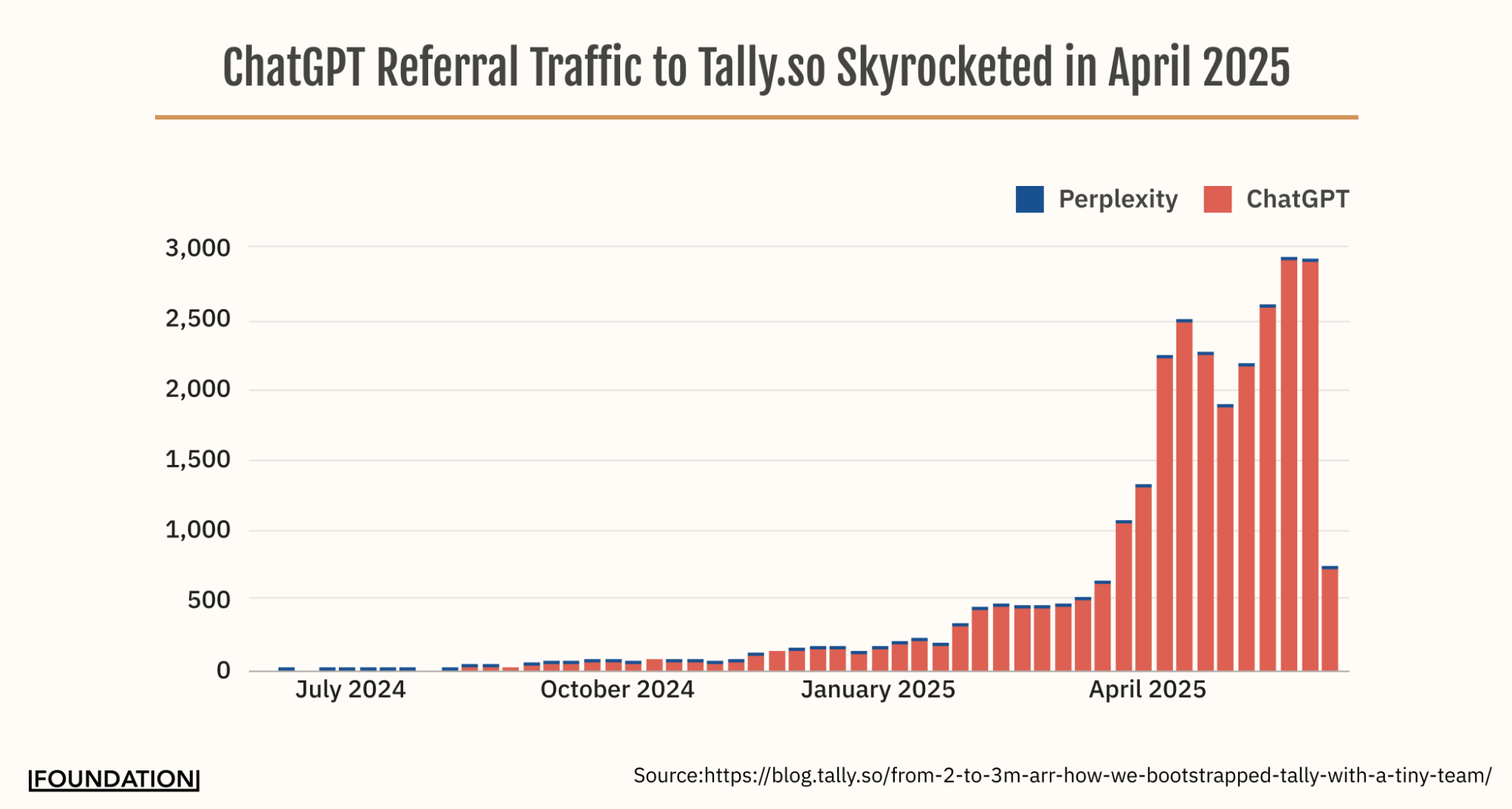

Tally: From Bootstrapped Underdog to AI-First Growth Engine

The challenge: Tally is a bootstrapped form builder competing against Typeform and Google Forms, two brands with massive existing awareness.

The strategy: High-intent comparison pages targeting “Tally vs Typeform” and similar queries. Heavy engagement on Reddit, including the r/TallyForms community. An LLM analytics stack for tracking visibility. And a key move: attribution capture via their onboarding survey to connect AI discovery to signups.

The results: ChatGPT became Tally’s leading referral source, accounting for 9.6% of web referrals. 25% of new signups came from AI discovery. The company logged over 125,000 visits from ChatGPT in a single month and reached their $3M ARR milestone five months ahead of schedule.

Key insight: User-generated content outperformed mass-produced marketing material. Community platforms were crucial for generating authentic responses that LLMs trusted and cited.

Read the full Tally AI-Growth Case Study here.

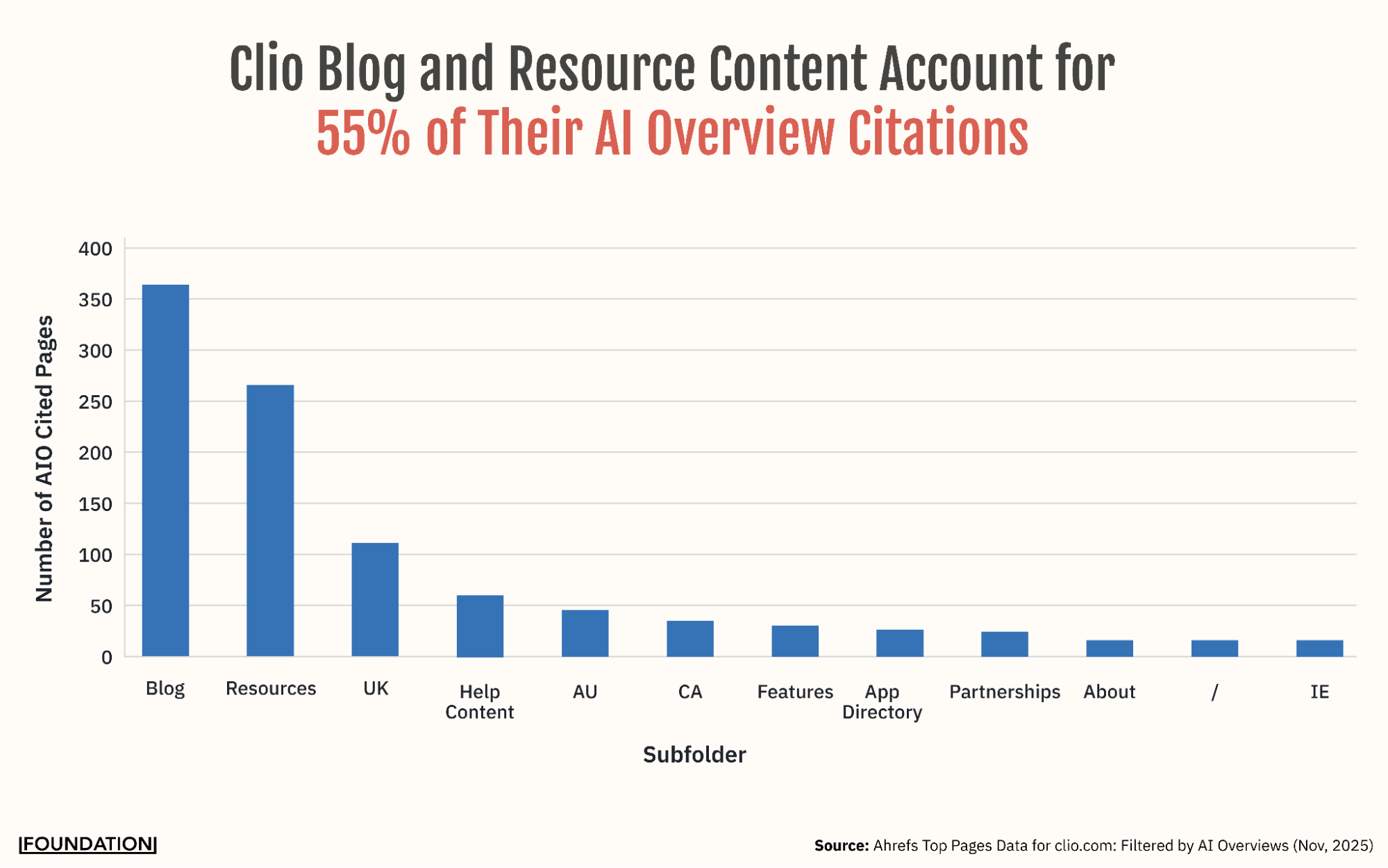

Clio: A Decade of Content Paying Off in the AI Era

The challenge: Clio operates in the competitive legal tech space, where AI Overviews threatened to erode the organic traffic they’d built over years.

The strategy: Rather than pivoting to a GEO-specific playbook, Clio benefited from a decade of authoritative content investment: 555 blog URLs, 383 resource pages, localized content across the UK, Canada, Australia, and Ireland, and a domain rating of 85 backed by 13,100+ referring domains.

The results: Full SERP dominance. First mention in AI Overviews, plus top organic and paid positions. ChatGPT also became a leading referral source, at 39.9% of referral traffic at the time of our analysis. Their citation share hit 7.3%, which is more than the next four competitors combined. Sentiment ran 74.8% positive, with Share of Model ranging from 32.9% on ChatGPT to 47.8% on Gemini.

Key insight: Clio didn’t optimize for AI specifically. They built content so well-structured and authoritative that AI naturally gravitated to it. The lesson: the best GEO strategy is often a decade of great SEO. Not everyone has that luxury, which is why the four pillars matter.

Learn how Clio’s SEO dominance translates to AI visibility now.

The evidence is there. The question now is how to do it. Whether you’re starting from zero or already tracking AI visibility, the next section walks through the exact steps to build your GEO foundation, prioritized by impact and sequenced so you see results within 90 days.

How to Get Started With GEO

You’ve seen the data. You understand the mechanics. You know what the competitive landscape looks like. Now it’s time to do something about it.

The mistake most teams make is trying to boil the ocean: launching a dozen initiatives across all four pillars at once and measuring none of them. The brands that move fastest start with a clear baseline, build a focused 90-day plan, and let the data tell them where to invest next.

Here’s the sequence that works.

Step 1: Run Your Baseline Assessment

Start by defining your “Golden Prompts”, the top 15–20 questions your customers actually ask AI tools. These should be conversational, specific, and often include multiple criteria. Focus on bottom-of-funnel modifiers:

- “Best [category tool] for [use case]?”

- “Which is the better [tool type] for [target market], [Your brand] or [Competitor]?”

- “What’s the best [tool type] alternative to [Competitor]?”

Run these prompts in incognito mode across ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews. Use fresh instances to avoid personalization bias.

For each prompt, build a scorecard: Did you appear? What position? What was the sentiment? What sources were cited? Was the information accurate?
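One way to keep that scorecard consistent across platforms is to record each prompt run as a structured row. The sketch below is illustrative only: the field names, the sample prompts, and the `appearance_rate_by_platform` helper are our assumptions, and a spreadsheet with the same columns would do the same job.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ScorecardEntry:
    """One Golden Prompt run on one AI platform (hypothetical schema)."""
    prompt: str
    platform: str
    appeared: bool                      # Did you appear?
    position: Optional[int] = None      # What position? (1 = first brand named)
    sentiment: Optional[str] = None     # "positive", "neutral", or "negative"
    cited_sources: List[str] = field(default_factory=list)
    accurate: Optional[bool] = None     # Was the information accurate?


def appearance_rate_by_platform(entries: List[ScorecardEntry]) -> Dict[str, float]:
    """Share of recorded runs, per platform, in which the brand appeared."""
    totals: Dict[str, int] = {}
    hits: Dict[str, int] = {}
    for entry in entries:
        totals[entry.platform] = totals.get(entry.platform, 0) + 1
        hits[entry.platform] = hits.get(entry.platform, 0) + int(entry.appeared)
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Made-up sample rows, purely for illustration.
entries = [
    ScorecardEntry("Best form builder for startups?", "ChatGPT", True,
                   position=2, sentiment="positive",
                   cited_sources=["reddit.com"], accurate=True),
    ScorecardEntry("Best form builder for startups?", "Perplexity", False),
    ScorecardEntry("Best alternative to a competitor?", "ChatGPT", False),
]
rates = appearance_rate_by_platform(entries)
print(rates)
```

Recording absences as rows (with `appeared=False`) matters as much as recording wins, since the gaps are what the prioritization step works from.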

Then prioritize using a simple framework:

- High business value + low AI visibility = immediate priority. These are your biggest opportunities.

- High business value + high AI visibility = maintain and update. Protect what’s working.

- Low business value + high AI visibility = analyze why. You might be winning queries that don’t matter.

- Low business value + low AI visibility = deprioritize. Not every battle is worth fighting.

Once you have your list of immediate priorities and positions to maintain and update, you can start to build out your plan of attack (and defence).
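The four quadrants above can be expressed as a small decision function. This is a sketch under stated assumptions: the 1–5 business value score, the visibility rate, and both thresholds are hypothetical choices you would tune to your own scorecard.

```python
from typing import List, Tuple

# Hypothetical cut-offs; adjust to how your team scores prompts.
VALUE_THRESHOLD = 4          # business value scored 1-5 by sales and marketing
VISIBILITY_THRESHOLD = 0.5   # share of baseline runs in which the brand appeared


def prioritize(business_value: int, visibility: float) -> str:
    """Map a prompt to one of the four quadrants described above."""
    high_value = business_value >= VALUE_THRESHOLD
    high_visibility = visibility >= VISIBILITY_THRESHOLD
    if high_value and not high_visibility:
        return "immediate priority"
    if high_value and high_visibility:
        return "maintain and update"
    if high_visibility:
        return "analyze why"
    return "deprioritize"


# Made-up prompts with (business value, visibility) pairs for illustration.
prompts: List[Tuple[str, int, float]] = [
    ("Best [category tool] for [use case]?", 5, 0.1),
    ("Best [tool type] alternative to [Competitor]?", 5, 0.8),
    ("What is [category]?", 2, 0.7),
]
for text, value, visibility in prompts:
    print(f"{prioritize(value, visibility):<20} {text}")
```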

Step 2: Build Your 90-Day Foundation

Technical (Month 1): Fix technical SEO errors: 404s, canonicals, robots.txt issues. Implement llms.txt. Add comprehensive schema (Organization, Product, FAQ, Article). Refine AI crawler permissions. Build robust author pages with real credentials and trust signals.
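A quick way to audit AI crawler permissions is to test your robots.txt rules against the user-agent tokens the major AI vendors publish. The sketch below uses Python's standard `urllib.robotparser`; the crawler list and the sample robots.txt are assumptions for illustration, so check each vendor's current documentation before relying on the token names.

```python
from typing import Dict
from urllib.robotparser import RobotFileParser

# Commonly documented AI crawler tokens (assumed list; verify with each vendor).
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def crawler_access(robots_txt: str, url: str) -> Dict[str, bool]:
    """Report whether each AI crawler may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt that blocks one AI crawler site-wide.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

access = crawler_access(SAMPLE_ROBOTS, "https://example.com/pricing")
for agent, allowed in access.items():
    print(f"{agent:<16} {'allowed' if allowed else 'BLOCKED'}")
```

In this sample, the blanket `Disallow: /` for GPTBot would keep the pricing page out of reach for that crawler while the others fall through to the default rules. Blocks like this are easy to miss after a site migration.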

Content (Months 1–3): Create four to eight money pages: comparisons, alternatives, best-of lists. Optimize four to eight existing pages with structured data, statistics, expert quotes, and citations. Implement FAQ schema on your highest-traffic pages.
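FAQ schema is typically published as a JSON-LD block using schema.org's `FAQPage` type. The following sketch generates that markup from question-and-answer pairs; the `faq_jsonld` helper and the sample question are ours, and your CMS or SEO plugin may already produce the same output.

```python
import json
from typing import Dict, List


def faq_jsonld(pairs: List[Dict[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from question/answer pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": pair["question"],
                "acceptedAnswer": {"@type": "Answer", "text": pair["answer"]},
            }
            for pair in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Placeholder content; replace with the real questions from your page.
markup = faq_jsonld([
    {
        "question": "What is Generative Engine Optimization?",
        "answer": "Optimizing a brand's visibility within AI-generated answers.",
    },
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The questions and answers in the markup should match the visible page copy word for word, so generate both from the same source where you can.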

Off-Site (Months 1–3): Identify the top 50 cited URLs in your space using tools like Profound. Execute your first wave of editorial outreach to the publications AI already trusts. Engage in three to five high-value Reddit threads where your category is being discussed. Consider two to three YouTube videos optimized for AI-relevant queries.

Measurement: Set up weekly visibility audits, configure citation tracking, and build your monthly reporting dashboard.
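If you log the URLs cited in each audited AI response, citation tracking reduces to counting domains. The sketch below assumes citation share means "a domain's citations divided by all citations logged"; dedicated tools may define it differently, and the helper names and sample URLs are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Normalize a URL to its host, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def citation_share(cited_urls: Iterable[str]) -> Dict[str, float]:
    """Fraction of all logged citations that point at each domain."""
    counts = Counter(domain_of(url) for url in cited_urls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {domain: count / total for domain, count in counts.most_common()}


# Made-up citations collected from one week's visibility audit.
week_citations = [
    "https://www.reddit.com/r/SaaS/comments/abc",
    "https://reddit.com/r/marketing/comments/def",
    "https://www.example.com/blog/comparison",
    "https://www.youtube.com/watch?v=xyz",
]
shares = citation_share(week_citations)
for domain, share in shares.items():
    print(f"{domain:<14} {share:.0%}")
```

Running the same count each week gives you a trend line for your own domain and shows which third-party sites are gaining or losing influence in your category.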

Step 3: What Drives Faster Gains

Brands that move from 0% to 15–25% Share of Model in under six months typically share a few habits. They start with off-site presence (Reddit, YouTube, and review sites) before on-domain content. They optimize existing high-traffic pages before creating new ones. They focus heavily on comparative content (alternatives, comparisons, “best of” lists). And they implement aggressive refresh cadences: monthly for money pages, quarterly for blog content.

The common thread is prioritizing where AI already looks before trying to change where it looks.

Working With a GEO Agency

If you’re currently filling out your GEO RFP for an agency or partner, here are a few things to keep in mind.

GEO success requires a breadth of capabilities that few agencies have built. At a minimum, you need technical SEO expertise (schema, E-E-A-T, infrastructure), content production at scale (four to eight assets per month), off-site authority building (PR, community engagement, review site optimization), multi-platform strategy (not just your domain), and GEO-specific measurement capabilities using tools like Profound, AirOps, or Athena.

Red Flags

- They only want to optimize your website — that’s missing 90% of the opportunity.

- They offer a static scope — GEO requires dynamic, month-to-month strategy adjustments based on what the data shows.

- They have no community or Reddit expertise — they can’t address the single most-cited domain across LLMs.

- They can’t show citation tracking data — if they can’t measure it, they can’t prove results.

- They promise quick wins — consistent visibility takes 12–16 weeks to build at minimum.

Questions to Ask

- How do you approach off-site citations?

- What’s your Reddit and YouTube strategy?

- How do you measure visibility volatility?

- Can you show citation share data from existing clients?

- What’s your recommended content refresh cadence?

The right GEO partner will orchestrate all four pillars with dynamic monthly scopes tailored to what your brand needs, when it needs it, not a rigid, one-size-fits-all content calendar.

The Window Is Open. Are You Ready to Act?

95% of buyers purchase from their Day One shortlist. That shortlist is increasingly shaped by AI conversations your team can’t see or track. The brands that establish AI visibility now are building compounding advantages that will only widen with time.

The playbook is clear.

Run your baseline assessment. Identify your citation gaps. Build your 90-day foundation. And commit to the dynamic, multi-platform strategy that GEO demands.

The search landscape has changed. The question isn’t whether to invest in GEO; it’s whether you can afford not to.

Get in touch with the leading generative engine optimization agency today to bring your marketing strategy into the AI era.