
Welcome to another edition of What Matters This Week.

Last week we broke down our 8,566-keyword study on Reddit’s dominance in B2B search. This week, Reddit itself just changed the rules of engagement.

Reddit Is Cracking Down on Bots. If Your Brand Uses Automation, Pay Attention.

Here’s the TL;DR

- Reddit is rolling out mandatory [App] labels for automated accounts, human verification for suspicious behavior, and easier bot reporting tools on March 31st.

- The brands running authentic employee accounts just got a structural advantage, while anyone relying on gray-area automation is about to be exposed.

- Audit every account posting on your behalf, register legitimate automation before the June deadline, and double down on named employee accounts in the subreddits that matter to your category.

What’s happening

Reddit CEO Steve Huffman (u/spez) published a major platform update this week: “Humans welcome (bots must wear name tags).” The announcement lays out four changes coming to Reddit, all aimed at the same goal: making sure users know when they’re talking to a person and when they’re not.

Here’s what’s changing:

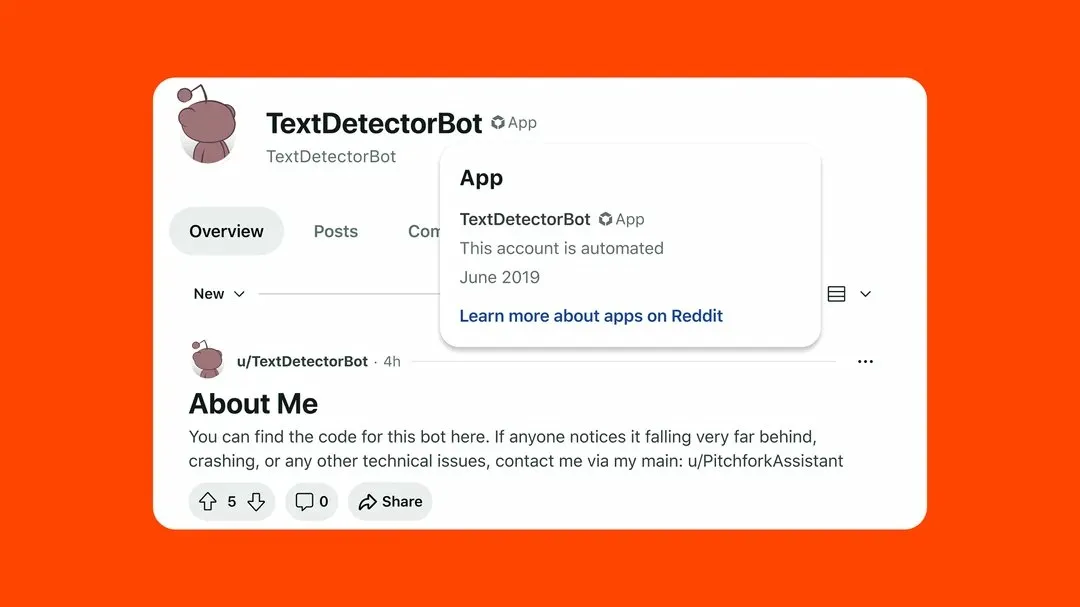

- A new [App] label for automated accounts. Starting March 31st, accounts that use automation in allowed ways — what Reddit has historically called “good bots” — will carry a visible [App] tag on their profile and posts. If you see that label, you know you’re interacting with a machine, not a person. Reddit’s developer team outlined two tiers:

  - “Developer Platform App” for accounts built on Reddit’s official dev tools

  - A general “App” label for other non-violating automated accounts

- Continued removal of spam bots at scale. Reddit says it already removes an average of 100,000 accounts per day for spam and nefarious bot activity. That pace continues.

- Human verification for suspicious accounts. If an account’s behavior suggests it isn’t human (for example, signs of automation or web agents), Reddit may ask it to confirm there’s a person behind it. Huffman was explicit: this is not sitewide human verification. It’s targeted, rare, and aimed at accounts exhibiting automated behavior.

- Easier reporting tools for users. Reddit is expanding how users can flag suspected automation, including using community comments like “nice post, bot, now f*** off” as a signal. As Huffman put it: “Redditors have long been the best bullshit detectors, and increasingly great Turing testers.”

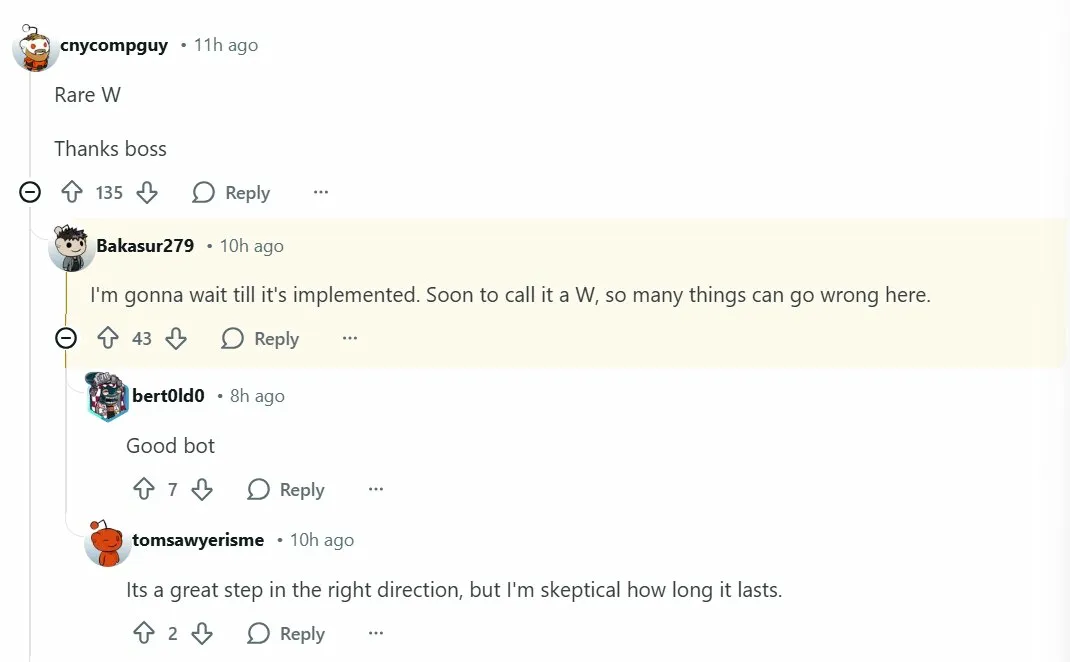

The community response was cautiously positive. The top comment on Huffman’s post, with 135 upvotes: “Rare W. Thanks boss.” Others were more measured: “I’m gonna wait till it’s implemented. [Too] soon to call it a W, so many things can go wrong here.”

On privacy, Huffman addressed concerns head-on. Reddit’s approach uses passkeys and third-party verification tools that confirm humanness without exposing identity. When one user threatened to leave if ID verification was ever required, Huffman responded: “We know, but hear me out, no ID, but you need to send us a copy of your diary.”

Why it matters

- The astroturfing window is closing, fast.

We recently covered brands getting publicly called out across subreddits for suspected bot activity and astroturfing. This announcement is the platform-level infrastructure that makes enforcement systematic.

Reddit has always relied on two responses to police bad actors:

- Human response: moderators and community vigilance

- Platform response: automated detection and labeling tools built into Reddit’s back end

While this is an update to the platform response, it also makes it easier for the humans of Reddit to push back against spam. The margin for brands operating in gray areas just collapsed.

If your Reddit strategy relies on accounts that could be flagged as automated — sockpuppets, mass-posting tools, engagement bots, or agency accounts running at scale without human oversight — this is the moment to stop. Not because it might get caught eventually. Because the detection infrastructure is now being built to catch it by default.

- Legitimate brand presence just became more valuable.

Here’s the upside: as Reddit gets better at identifying and labeling automation, the accounts that are clearly human become more credible by contrast.

For B2B brands that have invested in genuine Reddit presence — real employees answering real questions in relevant subreddits, branded accounts engaging transparently, subject-matter experts contributing to threads — this announcement raises the floor under your investment. When every bot carries a tag and suspicious accounts get flagged, authentic participation stands out more, not less.

This is the same dynamic we’ve been tracking across AI search: as platforms get pickier about what they trust (GPT-5.3 citing fewer sources, Google elevating UGC over vendor content), the brands with real authority compound their advantage.

- Reddit is protecting the asset that makes it valuable to LLMs.

The announcement makes sense beyond just platform health. Reddit’s content is licensed to Google and OpenAI for AI training. Its threads are cited millions of times by ChatGPT, Perplexity, and Google AI Overviews. The value of that content (and therefore the value of Reddit’s licensing deals) hinges on it being authentically human.

Huffman said it directly: “Reddit’s purpose is for people to talk to people. And we want it to stay that way.”

If Reddit gets overrun by bots posting AI-generated content, the platform’s value as a training source degrades. This crackdown protects both the user experience and the authenticity signal that makes Reddit one of the most cited domains in AI search.

For marketers, that means Reddit’s influence on both SERPs and LLM responses isn’t going away. If anything, a cleaner platform with more verified human content makes Reddit threads even more trustworthy in the eyes of AI models.

What to do about it

- Audit every account posting on your behalf — right now. If you use an agency or freelancer for Reddit, ask them which accounts they’re operating, whether any use automation, and whether those accounts would survive a verification prompt. If their answer is unclear, that’s your answer.

- Register any legitimate automation before the deadline. If your brand runs allowed bots (auto-moderation in your branded subreddit, notification bots, customer support automation), register them through Reddit’s developer portal before the end of June. Registered apps may be eligible for a porting bounty, and more importantly, they’ll carry the [App] label instead of getting flagged as suspicious.

- Double down on named employee accounts. The “u/FirstName from BrandName” model we recommend is more important than ever. As account verification rolls out, clearly human profiles with posting history, karma, and genuine community engagement become the gold standard. If you don’t have at least one employee actively participating in the 3–5 subreddits that matter to your category, start now.

- Treat this as a signal about where all platforms are heading. Reddit is one of the first major platforms to systematically label automation and build human verification into its infrastructure. LinkedIn, YouTube, and others are likely to follow as AI-generated content scales. The brands building real human presence across platforms today are the ones that won’t scramble when every platform starts asking the same question: is there a person behind this account?

🎙️ Upcoming Event: The State of AI Search — Ross Simmonds x Reddit for Business

Our CEO Ross is partnering with Reddit for Business to break down how LLMs decide which brands to surface — and what marketers can do about it right now. He’s bringing new research, new data, and new tactics on the role of UGC and community content in AI visibility. If this week’s newsletter hit a nerve, this session goes deeper.

👉 Register for the free webinar

Go Deeper on: Doing Reddit the Right Way

→ Understanding the Reddit Mod: A Marketer’s Guide — Our full breakdown of what moderators can see, how they make decisions, and how to build relationships with them. Covers account setup, the karma economy, disclosure best practices, and why treating each subreddit like its own market is the only approach that works. Essential reading in light of this week’s crackdown. [Foundation Labs]

→ 1Password’s Reddit-Based Approach to User Retention — The best example of what “doing Reddit right” looks like in practice. A dozen employees active in their branded subreddit, a flair system that triages feedback to product teams, and a community that educates itself. If you’re wondering what a compliant, high-value Reddit presence looks like after this week’s announcement, this is the model. [Foundation Labs]

→ We Asked 7 B2B Reddit Strategists What “Good” Content Looks Like — Seven Foundation strategists share what earns engagement vs. what gets you banned. Includes the three-sentence test (user fit, brand fit, algorithm fit), the lurk-learn-leap framework, and why AI citation doesn’t change the fundamentals — it just raises the stakes. [Foundation Labs]

That’s it for this week.

If something landed, tell us. If something felt off, tell us that too. Reply to this email or DM me on LinkedIn.

Have a great weekend,

Ethan Crump