
You’ve done everything the playbook says to do. Keywords mapped. Content calendar built. Posts and pages published. Your content strategy checks every box that’s supposed to matter.

But when your buyer asks an AI a question your product was literally built to answer, someone else gets the citation.

That’s not a content quality problem. It’s not a domain authority problem. It’s a query fan-out problem, and most B2B marketing teams have no idea it exists.

Nearly a third of AI citation opportunities are invisible to any keyword tool your team is currently using. That’s not a minor gap in your strategy. That’s a missing dimension of it.

But to understand why, you need to see what happens under the hood from when your buyer asks a question to when the AI gives its answer.

What Is a Query Fan-Out?

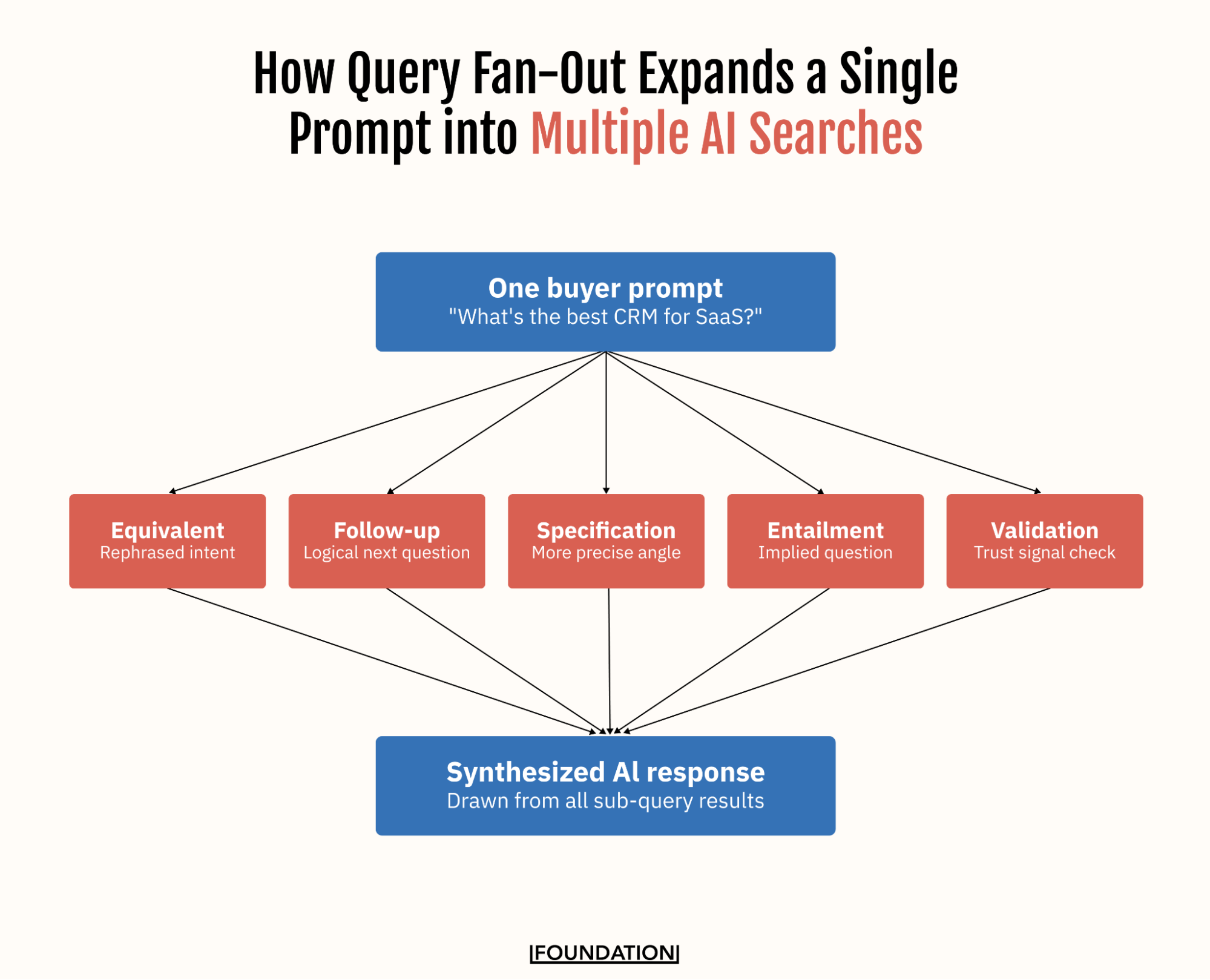

Query fan-out is the process by which AI search systems (ChatGPT, Google AI Mode, Perplexity, and others) take a single user prompt and automatically expand it into multiple related sub-queries before synthesizing a response.

Elizabeth Reid, Google VP and Head of Search, outlined the value of the query fan-out technique in AI search during the AI Mode launch in 2025:

“[It’s] breaking down your question into subtopics and issuing a multitude of queries simultaneously on your behalf. This enables Search to dive deeper into the web than a traditional search on Google.”

To understand why query fan-outs are so crucial to AI visibility and generative engine optimization (GEO), it helps to see how search has evolved. Ahrefs’ Despina Gavoyannis explains the shift perfectly:

- Traditional search worked one-to-one: one query returned one results set matching that phrase.

- Next came many-to-one: search engines were updated so similar queries (content marketing agency and content marketing firm) could be satisfied by the same pages.

- AI search works one-to-many: one query expands into dozens of sub-queries that the AI runs simultaneously on the user’s behalf, and the final response is synthesized from everything it finds across all of them.

In other words, when someone asks “what’s the best CRM for a mid-market SaaS company?”, the AI doesn’t just search for that particular phrase in isolation or show results for similar queries. It expands the search to include CRM pricing comparisons, integration capabilities, reviews from similar-sized companies, implementation timelines, analyst rankings, and more, all at once. That structural shift changes what it means to be visible in search.

The number of fan-out queries generated by a prompt varies depending on the model and query type. Recent research from Nectiv puts the average number of fan-outs at about 10 per prompt, but for complex queries or deep research prompts that number can go significantly higher.

The practical consequence: your buyers’ “one search” is actually dozens of searches happening in parallel, and your content is (hopefully) being evaluated across all of them.

Under the Hood: How Google’s Query Fan-Out System Actually Works

While the “AI generates multiple sub-queries” explanation is accurate, it undersells how sophisticated the system actually is. Google’s own patent filing, US11663201B2 — Generating query variants using a trained generative model, describes the full architecture, and it has direct implications for how B2B brands should think about content.

Most important: there are eight distinct types of fan-out queries. Google’s system not only rephrases your query, but it generates different variants depending on what information it needs:

| Query Type | Description | Example |
| --- | --- | --- |
| Equivalent query | Restates the original in different words. | “Best content marketing agency for SaaS” → “Top B2B content marketing firms for software companies” |
| Follow-up query | Poses the logical next question. | “What does a content marketing agency do?” → “How long does it take to see results from a content marketing agency?” |
| Generalization query | Zooms out to a broader parent topic. | “Content marketing agency for fintech startups” → “B2B marketing agencies for financial services” |
| Canonicalization query | Converts informal or ambiguous phrasing into standardized search terms. | “Content guys for our SaaS” → “B2B SaaS content marketing services” |
| Language translation query | Translates the query to surface content across multilingual sources. | Relevant for B2B brands selling into non-English speaking markets. |
| Entailment query | Pursues what’s logically implied by the original. | “Should we hire a content marketing agency?” → “What is the ROI of outsourcing B2B content marketing?” |
| Specification query | Drills down into a more precise angle. | “Content marketing agency” → “Content marketing agency for Series B SaaS companies with under 100 employees” |
| Clarification query | Resolves ambiguous intent before proceeding. | “Best agency for content” → system asks whether the user means SEO content, thought leadership, social content, or video, then fans out accordingly. |

Each of these query types has different content format expectations. For instance, a follow-up query might reward a FAQ page or a dedicated “what to expect” guide, while a specification query might reward a case study filtered by company stage or industry. An entailment query might reward an ROI calculator or a benchmarks post. In other words, the query fan-out system significantly expands the search surface to find content that satisfies the specific type of sub-queries it generates.

A closer look at the Google patent, subsequent research on how AI search functions, and the AI Mode rollout provides even more insight into how query fan-outs work:

The system is iterative, not one-shot.

The patent describes a continuous generation loop where a “trained control model” evaluates the quality of what’s been found so far and decides whether to keep searching. If the results for one set of sub-queries are thin or conflicting, the system generates another round, dynamically determining how many iterations to run based on the quality of what it’s already found.

The patent filing makes the structural logic even more explicit. The system generates different types of sub-queries depending on what information gap it’s trying to close:

- Zooming out to a broader topic

- Drilling down into a more specific angle

- Pursuing what’s logically implied

- Resolving ambiguity before proceeding

Each type is designed to fill a different piece of the picture. The result isn’t a single search. It’s a structured research process running across multiple angles in parallel.

The implication here is important: a single high-quality page won’t necessarily satisfy the system. The control model is looking for sufficient quality and coverage across the full retrieval set. If your content answers the core question but leaves gaps in the surrounding sub-queries, the loop continues (and finds someone else).
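The loop described above can be sketched in a few lines of Python. This is a simplified illustration, not the patent’s implementation: the function `iterative_fan_out`, the toy index, and the three stand-in callables (including the “control” check that decides when coverage is sufficient) are all hypothetical names invented for this example.

```python
from typing import Callable, Dict, List

def iterative_fan_out(
    query: str,
    generate_variants: Callable[[str, Dict[str, List[str]]], List[str]],
    search: Callable[[str], List[str]],
    is_sufficient: Callable[[Dict[str, List[str]]], bool],
    max_rounds: int = 3,
) -> Dict[str, List[str]]:
    """Run sub-queries in rounds until the stand-in control check passes."""
    results: Dict[str, List[str]] = {}
    pending = [query]
    for _ in range(max_rounds):
        # Retrieve results for every sub-query not yet run.
        for sub_query in pending:
            if sub_query not in results:
                results[sub_query] = search(sub_query)
        # The control check decides whether another round is needed.
        if is_sufficient(results):
            break
        pending = generate_variants(query, results)
    return results

# Toy stand-ins (all hypothetical) so the sketch runs end to end.
TOY_INDEX = {
    "best crm for mid-market saas": ["vendor-a.example/crm"],
    "crm pricing comparison": ["vendor-a.example/pricing", "reviews.example/crm-pricing"],
    "crm implementation timeline": ["vendor-b.example/onboarding"],
}

def toy_search(sub_query: str) -> List[str]:
    return TOY_INDEX.get(sub_query, [])

def toy_variants(query: str, results: Dict[str, List[str]]) -> List[str]:
    # Propose any indexed sub-query that has not been run yet.
    return [q for q in TOY_INDEX if q not in results]

def toy_is_sufficient(results: Dict[str, List[str]]) -> bool:
    # "Enough" here means at least three distinct pages were retrieved.
    pages = {page for hits in results.values() for page in hits}
    return len(pages) >= 3

found = iterative_fan_out(
    "best crm for mid-market saas", toy_variants, toy_search, toy_is_sufficient
)
```

In this toy run, the first round retrieves a single page, the check fails, and a second round of sub-queries fills the gaps. A brand whose pages only answer the core query would be absent from that second round.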

Reciprocal rank fusion determines what to cite.

After all the fan-out queries have run and returned results, the system doesn’t just pick the top-ranked page from a single query. It uses a scoring method called reciprocal rank fusion (RRF) to merge all the result lists into one unified ranking.

Each document gets scored based on its position across multiple query result lists. For example, a page ranking #2 for one sub-query and #5 for another accumulates a combined score. Documents that appear consistently across multiple fan-out queries score higher than those that appear in only one, even if that one ranking is strong.

RRF is why appearing across multiple sub-queries can matter more than ranking #1 for the core query alone.
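To make the scoring concrete, here is a minimal Python sketch of reciprocal rank fusion. In its standard form, each document’s score is the sum of 1 / (k + rank) over every result list it appears in. The constant k = 60 is the conventional default in the RRF literature; whatever value Google uses, along with the page names and the two result lists below, is an assumption for illustration only.

```python
from collections import defaultdict

def reciprocal_rank_fusion(result_lists, k=60):
    """Merge several ranked lists into one ranking using RRF scores."""
    scores = defaultdict(float)
    for results in result_lists:
        # Ranks are 1-based: the top result contributes 1 / (k + 1).
        for rank, doc in enumerate(results, start=1):
            scores[doc] += 1.0 / (k + rank)
    # Highest combined score first.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical result lists for two fan-out sub-queries.
# page_a ranks #1 for one sub-query only; page_b ranks #2 and #5.
sub_query_1 = ["page_a", "page_b", "page_c", "page_d", "page_e"]
sub_query_2 = ["page_f", "page_g", "page_h", "page_i", "page_b"]

fused = reciprocal_rank_fusion([sub_query_1, sub_query_2])
top_page, top_score = fused[0]
```

In this toy example, `page_b` (ranked #2 and #5) finishes ahead of `page_a` (ranked #1 in a single list), because 1/62 + 1/65 is larger than 1/61. That is the consistency effect described above.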

User context shapes fan-outs.

Google’s query fan-out mechanism now factors in a rich set of user attributes when generating variants. The AI Mode announcement confirmed that personal context factors into the process, including:

- User location

- Time of day

- Professional background

- Recent search behavior

- Past queries in the current session

With user permission, AI Mode can even use connected apps like Gmail to personalize results. This explains some of the variability between fan-outs and AI buyer journeys in general. Someone who’s been researching content marketing ROI will get a different fan-out than someone starting cold, even on the same query.

Fan-out extends to transactional queries.

Google’s AI Mode announcement explicitly described the system kicking off a query fan-out to “look across sites to analyze hundreds of potential ticket options with real-time pricing and inventory” for purchase queries.

For B2B brands, this is a signal of where things are heading: B2B buyers use AI to research, evaluate, and shortlist vendors in the same session. The brands that have built coverage across the full research-to-decision journey will have a structural advantage as AI search becomes the default interface for B2B procurement research.

The broader takeaway from the technical architecture is this: the AI is not a passive retriever. It’s an active researcher with a structured process for generating questions, evaluating answers, and deciding when it has enough to proceed.

Now let’s look at some recent data on the structure of query fan-outs across leading AI models.

What the Data Says About Fan-Out Queries (And What That Means for Your Content)

How fan-out plays out in practice depends heavily on query intent. A recent analysis of over 43,000 queries by AirOps Head of Research Oshen Davidson found that ChatGPT generated two or more fan-out queries on 89.6% of searches, and the structure of those follow-up searches varied significantly depending on what the user was trying to accomplish.

For informational queries, ChatGPT tended to stay close to the original phrasing. Definition queries remained near-verbatim 51.6% of the time (the highest rate of any query type), while how-to queries followed at 42.6%, most often adding category or use-case context like a specific tool or scenario.

Commercial queries told a different story. Rather than lightly rewriting the original search, ChatGPT more often broke the question into component-level sub-queries:

- Comparison queries showed the highest rate of decomposition at 38.4%. A query like “HubSpot vs. Salesforce” became separate searches for pricing, features, and reviews rather than a single head-to-head lookup.

- Evaluation queries split into sub-queries 37.5% of the time, branching into follow-ups around benefits, limitations, and use cases.

- Validation queries — the kind buyers use late in a decision cycle — stayed near-verbatim 40.6% of the time, which means your product-specific content needs to match the precise phrasing a buyer in evaluation mode is likely to use.

The content strategy implication is direct. A pillar page on your product category should connect to cluster pages covering pricing, comparisons, use cases, and methodology — because those are exactly the subtopics ChatGPT is designed to decompose and search independently. Every piece of content needs to be understood as part of a topic ecosystem, not a standalone asset.

What Query Fan-Out Means for B2B Marketing Teams

B2B marketing teams are well-versed in implementing and monitoring SEO strategies. They track rankings for target terms, build content clusters around pillar pages, and monitor organic traffic as a proxy for content effectiveness. It’s a sensible strategy — and it remains important.

But query fan-out creates a fundamentally different search surface, and traditional keyword tools can’t see it. The AirOps data shows why this is a difficult situation for marketing teams:

1. Your Keyword Tools Are Missing Most of the Search Surface

95% of ChatGPT’s fan-out queries had zero monthly search volume by conventional tracking standards. These are AI-generated searches, not recurring human queries — they won’t appear in Ahrefs or Search Console. If your content covers the core topic but misses the surrounding sub-questions, you’re competing for only a fraction of the available citation opportunity.

2. Getting Found Is Only Half the Battle

Of the 548,534 pages ChatGPT retrieved, 85% were never cited in a final response. Pages with strong title-to-query alignment were cited at more than twice the rate of poorly aligned pages, and those ranking #1 in Google were cited 3.5x more often than pages outside the top 20.

3. Topical Depth Beats Domain Authority

High-authority sites (DA 80–100) had the lowest citation rate of any tier despite being frequently retrieved. The AI pulled them in but didn’t end up using them. Depth of coverage on a specific subtopic matters more than overall domain authority.

The big takeaway here: stop asking “what do we want to rank for?” and start asking “what does the AI need to know to confidently recommend us?” That means auditing for coverage gaps across the full constellation of sub-queries your buyers trigger (pricing, comparisons, use cases, trust signals), not just your primary target terms.

What B2B Marketing Teams Should Do Differently

Understanding the fan-out problem is one thing; closing the gap is another. The goal is to expand on your existing SEO and content strategy by adding a fan-out dimension to it.

These five shifts give you a practical starting point.

- Map your priority topics by fan-out pattern. For each core topic you’re pursuing (your product category, methodology, or buyers’ core pain points), identify what an AI would realistically search for when answering a question about it. What sub-questions would emerge? What comparison angles? What trust signals would it look for?

- Audit for coverage gaps, not just keyword gaps. Traditional content audits assess whether you’re ranking for target terms. A fan-out audit goes further: it assesses whether you’re visible across the full constellation of sub-queries the AI uses to build its answer, and it surfaces the kind of gaps that simply won’t appear in a conventional keyword analysis.

- Prioritize Google performance for your highest-value topics. Google rankings remain a meaningful input into AI citation selection, with pages ranking #1 cited 3.5x more often than pages outside the top 20. The leverage point is moving your highest-intent pages closer to the top of the SERP, where the citation advantage is strongest.

- Invest in off-site visibility for trust-heavy queries. Many fan-out queries are trust signal searches, where the AI looks for third-party validation before recommending a brand. Review sites, industry publications, comparison platforms, and community discussions are all part of the fan-out citation surface. A strategy that treats owned content as the entire game will miss these queries entirely.

- Shift from keyword rankings to topic ecosystems. Stop asking “what do we want to rank for?” Start asking “what does the AI need to know to confidently recommend us?” The brands that accumulate citations across every sub-query that matters to their buyers are the ones that have built deep, interconnected content across the full topic landscape of their buyers’ research.
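As a rough illustration of the coverage audit described in the second shift, the sketch below checks a list of likely sub-queries against an inventory of pages that answer them. The sub-queries, URL paths, and the `find_coverage_gaps` helper are all hypothetical; a real audit would draw the sub-query list from observed fan-out data rather than a hand-written list.

```python
def find_coverage_gaps(sub_queries, coverage):
    """Return the sub-queries that no existing page addresses."""
    return [q for q in sub_queries if not coverage.get(q)]

# Hypothetical fan-out map for one priority topic.
sub_queries = [
    "crm pricing comparison",
    "crm integrations for saas",
    "crm implementation timeline",
    "crm reviews mid-market",
]

# Hypothetical inventory: pages (owned or third-party) answering each sub-query.
coverage = {
    "crm pricing comparison": ["/pricing"],
    "crm integrations for saas": ["/integrations", "/docs/api"],
    "crm reviews mid-market": [],  # tracked, but nothing answers it yet
}

gaps = find_coverage_gaps(sub_queries, coverage)
coverage_rate = 1 - len(gaps) / len(sub_queries)
```

Here two of the four sub-queries have no supporting page, so the topic is only half covered even if the core term ranks well.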

None of these shifts require starting from scratch. The content you’ve already built, the rankings you’ve already earned, and the topic clusters you’ve already mapped are all still working for you. Query fan-out just raises the stakes for what comes next — and the teams that understand it earliest will have a compounding advantage over those still optimizing for a single point of visibility in a single results set.

The Question Every B2B Marketing Team Needs to Start Asking

The old question for marketing teams was: what do we want to rank for? That question optimizes for a single point of visibility in a single results set.

The new question is: what does the AI need to know to confidently recommend us? That question optimizes for presence across the full research process a buyer’s AI platform runs on their behalf — the core query, the follow-up questions, the trust signal checks, the comparison lookups, the specification searches that narrow the field.

The brands that answer that second question, and build content ecosystems that reflect it, are the ones accumulating citations across every sub-query that matters to their buyers.

If you’re ready to build your content ecosystem today, get in touch with the leading generative engine optimization agency.